Your microservices are deployed. Pods are healthy. Liveness probes are passing. Your service mesh dashboard is mostly green.

Then a single gRPC method starts returning RESOURCE_EXHAUSTED to one upstream caller, but only when the request payload exceeds 4MB. Or a streaming RPC quietly stops sending messages while the connection stays open. Or one client hits a stale binding to a pod that's already been replaced and gets UNAVAILABLE for two minutes before reconnecting.

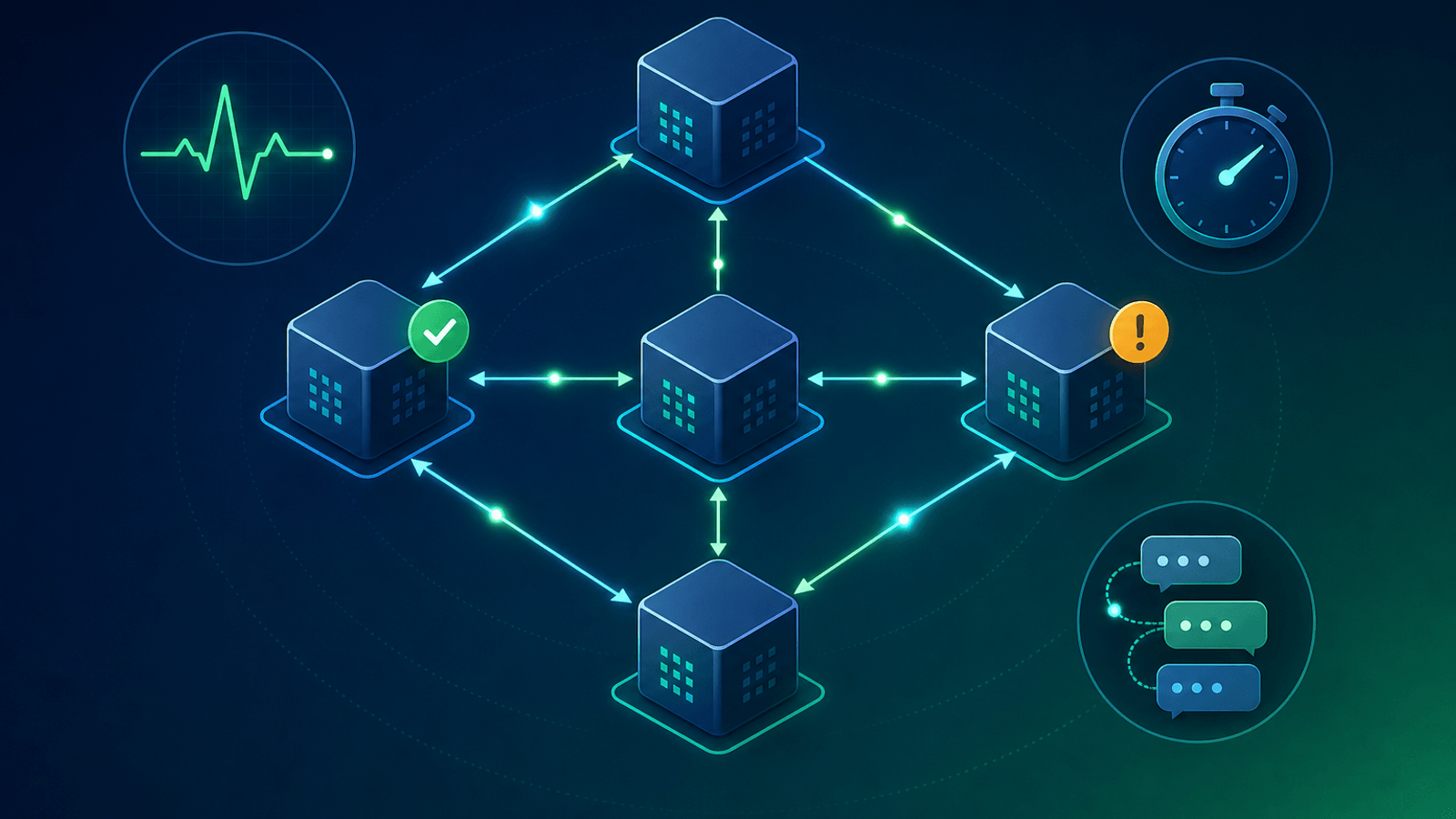

None of these failure modes show up in HTTP-style uptime monitoring. gRPC has its own protocol semantics, status codes, and failure patterns — and most monitoring tools were built for the REST world.

This guide covers what to monitor on a gRPC service, how to do it across the four call patterns (unary, server streaming, client streaming, bidirectional), and how to integrate gRPC monitoring with the rest of your microservice observability.

Why gRPC Needs Its Own Monitoring Approach

gRPC is not HTTP-with-a-different-syntax. It has its own protocol semantics that demand specific monitoring:

- HTTP/2 framing: everything runs over HTTP/2, so connection failures look different

- Status codes are different: gRPC uses OK, CANCELLED, DEADLINE_EXCEEDED, UNAVAILABLE, RESOURCE_EXHAUSTED, etc., not HTTP 200/500. A failed RPC typically still arrives as an HTTP 200 response, with the real outcome in the grpc-status trailer

- Long-lived connections: gRPC multiplexes many calls over pooled, reused HTTP/2 connections, so failures can be sticky

- Four call patterns: unary, server streaming, client streaming, and bidirectional streaming each fail differently

- Deadlines, not timeouts: gRPC propagates deadlines across hops; a misconfigured deadline cascades through your whole call graph

- Binary protocol: you can't just curl an endpoint; checks need a real gRPC client

- Service mesh interaction: Envoy, Istio, Linkerd, and others terminate, retry, and shape gRPC traffic

At best, a standard HTTP check on a gRPC port tells you whether the TCP socket accepts connections. It won't tell you whether your service is actually serving RPCs.

What to Monitor

1) gRPC Health Checking Protocol

gRPC defines a standard health checking protocol — a service called grpc.health.v1.Health with Check and Watch methods. This is the canonical way to monitor gRPC service health.

- Check: a single unary RPC that returns SERVING, NOT_SERVING, or UNKNOWN

- Watch: a server-streaming RPC that pushes status changes

- Per-service status: a single server can host multiple services; the health protocol supports per-service health

Almost every gRPC framework (gRPC-Go, gRPC-Java, grpc-python, .NET, etc.) provides a built-in implementation. Use it. Custom HTTP /health endpoints next to your gRPC server are a code smell — you end up monitoring HTTP while users actually use gRPC.
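A synthetic monitor usually calls Check on a schedule and shouldn't page on a single blip. The sketch below covers only the evaluation side. It assumes you obtain each result with a real gRPC client, for example stubs generated from health.proto. HealthTracker and its default threshold of 3 are illustrative choices, not part of the protocol. The enum values mirror ServingStatus in grpc.health.v1, and an empty service name conventionally means the server's overall health.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Dict, Optional


class ServingStatus(IntEnum):
    """Mirrors HealthCheckResponse.ServingStatus in grpc.health.v1."""
    UNKNOWN = 0
    SERVING = 1
    NOT_SERVING = 2
    SERVICE_UNKNOWN = 3  # only sent by Watch


@dataclass
class HealthTracker:
    """Debounces per-service Check results before raising an alert (hypothetical helper)."""
    threshold: int = 3  # consecutive bad results before alerting (assumed value)
    failures: Dict[str, int] = field(default_factory=dict)

    def record(self, service: str, status: Optional[ServingStatus]) -> bool:
        """Record one Check result for `service`.

        `status` is None when the RPC itself failed (connection refused,
        DEADLINE_EXCEEDED, NOT_FOUND for an unknown service, ...).
        Returns True once the service has been unhealthy `threshold`
        times in a row.
        """
        if status == ServingStatus.SERVING:
            self.failures[service] = 0
            return False
        self.failures[service] = self.failures.get(service, 0) + 1
        return self.failures[service] >= self.threshold
```

Resetting on the first SERVING result means a brief NOT_SERVING during a rolling deploy won't page anyone. A service that stays down for several consecutive checks will.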

2) Per-Method Status Code Distribution

gRPC defines 17 standard status codes. The ones you'll see most often each tell you something different:

| Code | Meaning | What it usually indicates |
|---|---|---|
| OK | Success | All good |
| CANCELLED | Client cancelled | Often deadline-related |
| INVALID_ARGUMENT | Bad request data | Input validation; often a client-side bug |
| DEADLINE_EXCEEDED | Took too long | Backend slow or deadline too tight |
| NOT_FOUND | Resource missing | Often expected, but spikes signal a problem |
| ALREADY_EXISTS | Conflict | Duplicate writes, race conditions |
| PERMISSION_DENIED | Auth issue | Often a client cred or role problem |
| RESOURCE_EXHAUSTED | Quota/limit hit | Rate limit, memory cap, payload too large |
| FAILED_PRECONDITION | State invalid | Client retry won't help |
| ABORTED | Concurrency conflict | Retry with backoff usually works |
| UNIMPLEMENTED | Method missing | Client/server version mismatch |
| INTERNAL | Server bug | The closest to a 500 |
| UNAVAILABLE | Service down | The closest to a 503; retryable |
| DATA_LOSS | Unrecoverable | Serious; investigate |
| UNAUTHENTICATED | Missing/bad creds | Auth path problem |

Track the distribution per method, not in aggregate. A surge in RESOURCE_EXHAUSTED on one method is a leading indicator for a backend running out of capacity — long before HTTP-style monitoring would notice.
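In practice these counts come from server-side interceptors or your metrics library. As an illustration only, the in-memory sketch below keeps per-method counters keyed by the full gRPC method path. It also lists the methods where a given code exceeds a chosen share of traffic. The class name, example method names, and any threshold you pass are hypothetical. The numeric values are the standard ones, including the two codes (UNKNOWN, OUT_OF_RANGE) that the table above leaves out.

```python
from collections import Counter, defaultdict

# The 17 canonical gRPC status codes and their numeric values.
STATUS_NAMES = {
    0: "OK", 1: "CANCELLED", 2: "UNKNOWN", 3: "INVALID_ARGUMENT",
    4: "DEADLINE_EXCEEDED", 5: "NOT_FOUND", 6: "ALREADY_EXISTS",
    7: "PERMISSION_DENIED", 8: "RESOURCE_EXHAUSTED", 9: "FAILED_PRECONDITION",
    10: "ABORTED", 11: "OUT_OF_RANGE", 12: "UNIMPLEMENTED", 13: "INTERNAL",
    14: "UNAVAILABLE", 15: "DATA_LOSS", 16: "UNAUTHENTICATED",
}


class StatusDistribution:
    """In-memory per-method status code counts (a stand-in for real metrics)."""

    def __init__(self):
        self.counts = defaultdict(Counter)

    def record(self, method: str, code: int) -> None:
        self.counts[method][STATUS_NAMES[code]] += 1

    def share(self, method: str, status: str) -> float:
        """Fraction of calls to `method` that ended with `status`."""
        counts = self.counts.get(method)  # .get() avoids creating empty entries
        if not counts:
            return 0.0
        return counts[status] / sum(counts.values())

    def methods_over(self, status: str, threshold: float) -> list:
        """Methods where `status` makes up more than `threshold` of calls."""
        return sorted(m for m in self.counts if self.share(m, status) > threshold)
```

For example, `methods_over("RESOURCE_EXHAUSTED", 0.05)` returns the methods where more than 5% of calls hit a quota or size limit. An aggregate success rate would hide that.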

3) Latency Distribution Per Method

gRPC services often have wildly different latency characteristics across methods. Aggregate latency hides the slow methods:

- p50, p95, p99 per method: the methods that show up at p99 are your candidates for optimization

- Compare against deadlines: if p95 latency is approaching your default deadline, you're about to start seeing DEADLINE_EXCEEDED

- Track over time: a method that gets slower over weeks is your earliest warning of N+1 queries, growing indices, or memory leaks
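Production systems usually derive percentiles from histograms, such as Prometheus buckets or OpenTelemetry histograms, rather than from raw samples. To stay self-contained, this sketch sorts raw samples and uses the nearest-rank method. `latency_report`, the single shared `deadline_ms`, and the 80% warning ratio are assumptions for illustration.

```python
import math
from collections import defaultdict


def percentile(sorted_ms, p):
    """Nearest-rank percentile of a non-empty, ascending list (0 < p <= 100)."""
    rank = math.ceil(p * len(sorted_ms) / 100)
    return sorted_ms[max(rank, 1) - 1]


def latency_report(samples, deadline_ms, warn_ratio=0.8):
    """Per-method p50/p95/p99 from (method, latency_ms) pairs.

    `near_deadline` is True when p95 exceeds warn_ratio * deadline_ms,
    i.e. the method is close to producing DEADLINE_EXCEEDED.
    """
    by_method = defaultdict(list)
    for method, ms in samples:
        by_method[method].append(ms)
    report = {}
    for method, values in by_method.items():
        values.sort()
        p95 = percentile(values, 95)
        report[method] = {
            "p50": percentile(values, 50),
            "p95": p95,
            "p99": percentile(values, 99),
            "near_deadline": p95 > warn_ratio * deadline_ms,
        }
    return report
```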

4) Connection-Level Health

gRPC connections are pooled and long-lived. Connection-level metrics matter:

- Active connection count per server

- Connection establishment rate — A spike here means clients are reconnecting frequently

- GOAWAY frame rate — Servers send GOAWAY before shutdown; bursts can indicate rolling deploys or pod churn

- Keepalive ping success — gRPC keepalives detect dead connections; failures here precede cascade failures
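Connection churn and GOAWAY bursts are both rate signals, so one sliding-window counter can watch either. This is a minimal sketch. It assumes you feed it one timestamp per new connection (or per GOAWAY) in non-decreasing order. `ReconnectRateMonitor` and its default window and limit are hypothetical, and you would need to tune them against your normal deploy churn.

```python
from collections import deque


class ReconnectRateMonitor:
    """Flags bursts of events (new connections or GOAWAYs) in a sliding window."""

    def __init__(self, window_s: float = 60.0, max_events: int = 50):
        self.window_s = window_s      # assumed window length in seconds
        self.max_events = max_events  # assumed burst limit per window
        self.events = deque()

    def record(self, ts: float) -> bool:
        """Record an event at time `ts` (seconds); True if the window is over the limit."""
        self.events.append(ts)
        # Drop events that have fallen out of the window.
        while self.events and self.events[0] <= ts - self.window_s:
            self.events.popleft()
        return len(self.events) > self.max_events
```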

5) Deadline Propagation

gRPC deadlines propagate across calls: if a client sets a 5-second deadline on Service A, Service A has at most 5 seconds to call Service B and return. Monitor this poorly and you'll spend hours chasing red herrings:

- Track deadline budget consumed at each hop

- Alert on services that consume a disproportionate share of the deadline budget: often the slowest, least-loved code path

- Watch for cascading DEADLINE_EXCEEDED: a single slow downstream pulls your whole call graph into timeout territory
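You can compute per-hop budget consumption from what each server sees when a call arrives. This sketch assumes a linear call chain with no fan-out. It also assumes every hop records the deadline remaining when it received the call, joined across hops by trace or request ID. That value could come from grpcio's `ServicerContext.time_remaining()` (converted to milliseconds) or from the context deadline in Go. The function names and the 50% threshold are illustrative.

```python
from typing import List, Tuple

# (service, deadline remaining in ms when that service received the call)
Chain = List[Tuple[str, float]]


def budget_consumed(chain: Chain) -> List[Tuple[str, float]]:
    """Deadline budget used between each hop and the next one it called.

    Each value includes the hop's own work plus network time to the next
    hop. The last hop has no successor, so it is not reported here.
    """
    return [
        (svc, remaining - next_remaining)
        for (svc, remaining), (_, next_remaining) in zip(chain, chain[1:])
    ]


def budget_hogs(chain: Chain, total_ms: float, max_share: float = 0.5) -> List[str]:
    """Services that burned more than max_share of the original deadline."""
    return [svc for svc, used in budget_consumed(chain) if used / total_ms > max_share]
```

With a 5-second deadline, a chain of `[("gateway", 5000), ("orders", 4800), ("inventory", 1200)]` shows `orders` using 3600 ms before it even called `inventory`. That makes `orders` the place to look first.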

6) Streaming RPC Health

Streaming RPCs (server, client, and bidi) need different monitoring than unary:

- Stream open count — Active long-lived streams

- Messages per stream — A stream with zero messages but an open connection is broken

- Stream lifetime — Both anomalously short and anomalously long durations are suspicious

- Cancellation rate — Spikes mean clients are giving up
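A stream that is open but silent looks perfectly healthy at the connection level, so the check has to look at message timestamps. The sketch below is hypothetical: `StreamMonitor` would be fed from stream interceptors. `idle_limit_s` should really be set per method, since some streams are legitimately quiet for long periods. Keepalive pings are not messages and don't reset the idle clock here.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class StreamStats:
    opened_at: float
    messages: int = 0
    last_message_at: Optional[float] = None


class StreamMonitor:
    """Tracks open streams and flags the ones that have gone quiet."""

    def __init__(self, idle_limit_s: float = 30.0):
        self.idle_limit_s = idle_limit_s  # assumed; tune per method
        self.streams: Dict[str, StreamStats] = {}

    def opened(self, stream_id: str, now: float) -> None:
        self.streams[stream_id] = StreamStats(opened_at=now)

    def message(self, stream_id: str, now: float) -> None:
        s = self.streams[stream_id]
        s.messages += 1
        s.last_message_at = now

    def closed(self, stream_id: str) -> None:
        self.streams.pop(stream_id, None)

    def quiet_streams(self, now: float) -> List[str]:
        """Open streams silent for longer than idle_limit_s.

        Streams that never sent a message are measured from their open time.
        """
        quiet = []
        for sid, s in self.streams.items():
            last = s.last_message_at if s.last_message_at is not None else s.opened_at
            if now - last > self.idle_limit_s:
                quiet.append(sid)
        return sorted(quiet)
```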

7) TLS / mTLS

gRPC almost always runs over TLS, often with mutual TLS in service meshes:

- Certificate expiry for both server and client certificates

- Cert rotation success: service mesh CAs (SPIRE, cert-manager) rotate certs; rotation failures cause UNAVAILABLE storms

- TLS handshake failure rate: a spike here is often the first signal of a cert or trust-store problem
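You can check certificate expiry on a gRPC endpoint with Python's standard `ssl` module. This is a sketch that assumes the endpoint accepts a TLS client offering `h2` via ALPN and doesn't require a client certificate. For mTLS you would also load one with `ctx.load_cert_chain(...)`, and for a private or mesh CA you would pass `cafile=` to `create_default_context`. The 21-day warning window is an arbitrary default, and `check_endpoint` returns a `(should_warn, days_left)` tuple.

```python
import socket
import ssl
import time


def days_until_expiry(not_after: str, now: float) -> float:
    """Days left before a cert's notAfter (e.g. 'Jun  1 12:00:00 2030 GMT')."""
    return (ssl.cert_time_to_seconds(not_after) - now) / 86400


def fetch_not_after(host: str, port: int, timeout: float = 5.0) -> str:
    """Complete a TLS handshake with a gRPC endpoint; return the leaf notAfter.

    Offers "h2" via ALPN because gRPC runs over HTTP/2 and some servers
    refuse TLS clients that don't negotiate it. Raises
    ssl.SSLCertVerificationError if the chain doesn't verify (including
    an already expired cert), which is itself worth alerting on.
    """
    ctx = ssl.create_default_context()  # pass cafile=... for a private/mesh CA
    ctx.set_alpn_protocols(["h2"])
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]


def check_endpoint(host: str, port: int, warn_days: float = 21.0):
    """Return (should_warn, days_left) for one endpoint."""
    left = days_until_expiry(fetch_not_after(host, port), time.time())
    return left < warn_days, left
```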

8) Service Mesh Behavior

If you run gRPC behind Envoy/Istio/Linkerd:

- Retry budget consumption: service mesh retries can mask real failures; track them separately

- Circuit breaker trips: counts and durations

- Load distribution across pods: gRPC multiplexes calls over long-lived HTTP/2 connections, so a connection-level (L4) load balancer pins each client to one pod. You need request-level (L7) or client-side load balancing to spread calls evenly
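Mesh proxies expose retry counters (Envoy's cluster statistics include `upstream_rq_retry`, for example), which makes a per-method retry ratio easy to compute. This is a minimal sketch that assumes you have first-attempt and retry counts for each method over the same window. The 20% budget is an assumed value, not a mesh default.

```python
def retry_ratio(original_requests: int, retries: int) -> float:
    """Retries as a fraction of original (first-attempt) requests."""
    return retries / original_requests if original_requests else 0.0


def check_retry_budget(per_method, budget=0.2):
    """per_method: {method: (original_requests, retries)} over one window.

    Returns the methods whose retry ratio exceeds the budget. These are
    failing more often than the success rate seen by clients suggests.
    """
    return sorted(
        m for m, (orig, retries) in per_method.items()
        if retry_ratio(orig, retries) > budget
    )
```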

Common gRPC Failure Modes

| Failure | User Impact | How to Detect |
|---|---|---|
| Server up but health check returns NOT_SERVING | Clients route away from healthy-looking pod | Health protocol monitoring |
| Single method returning RESOURCE_EXHAUSTED | Specific feature broken for some clients | Per-method status code tracking |
| Streaming RPC stops emitting messages | "Live" features silently freeze | Per-stream message rate alerts |
| Sticky connection to dead pod | Some clients see UNAVAILABLE for minutes | Connection establishment / GOAWAY metrics |
| Cert rotation failed mid-day | mTLS handshake failures cascade | Cert expiry + handshake failure rate |
| Deadline propagation misconfigured | Cascading DEADLINE_EXCEEDED across services | Per-hop deadline budget tracking |
| Service mesh retry storm | Apparent recovery hides root cause | Retry budget tracking |
| Protobuf schema mismatch after deploy | UNIMPLEMENTED on a previously working method | Per-method success rate |
| Large message exceeds maxInboundMessageSize | RESOURCE_EXHAUSTED on specific clients | Per-method, per-client error rates |
| Compression negotiation broken | Bandwidth blow-up, latency increase | Bytes-on-wire per method |

Setting Up gRPC Monitoring

Quick start

- Health protocol checks: run grpc.health.v1.Health/Check against each service from a synthetic monitor

- TLS / cert expiry monitoring on your gRPC endpoints

- Per-service success rate dashboard from server-side metrics

- Latency p95 alerts per method

Comprehensive setup

Add:

- Per-method status code distribution, with alerts on codes that are neither OK nor expected for that method

- Per-method latency p95 and p99, with alerts on regressions

- Streaming-specific metrics (open streams, messages-per-stream, stream lifetime)

- Connection-level metrics (open connections, GOAWAY rate, keepalive failures)

- Deadline budget tracking at each hop

- Service mesh metrics (retry rate, circuit breaker state, traffic split)

- Synthetic checks that exercise both unary and streaming patterns

- Multi-region health checks for any externally-exposed gRPC service
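Alerting on codes that are neither OK nor expected requires knowing what is expected for each method: NOT_FOUND is routine for a lookup but alarming elsewhere. The sketch below shows one way to express that, assuming per-window counts keyed by status name. The `EXPECTED` allowlist, its method names, and `min_count` are hypothetical.

```python
# Hypothetical per-method allowlist: codes that are part of normal behavior.
EXPECTED = {
    "/shop.Catalog/GetItem": {"OK", "NOT_FOUND"},
    "/shop.Cart/Add": {"OK", "ALREADY_EXISTS"},
}
DEFAULT_EXPECTED = {"OK"}


def unexpected_codes(method, counts, min_count=5):
    """counts: {status_name: n} for one method over an alert window.

    Returns the unexpected codes seen at least `min_count` times, so a
    single stray error doesn't page anyone.
    """
    allowed = EXPECTED.get(method, DEFAULT_EXPECTED)
    return {code: n for code, n in counts.items() if code not in allowed and n >= min_count}
```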

How gRPC Monitoring Connects to the Rest of Your Stack

gRPC monitoring isn't standalone. It needs to integrate with the broader observability story:

- APM/tracing — OpenTelemetry has first-class gRPC support; spans should include the gRPC method, status code, and deadline budget

- Metrics — Prometheus exposition for gRPC servers is standard; scrape per-method counters

- Logs — Structured logs with the gRPC method, status code, and request ID for cross-service correlation

- External monitoring — For externally-exposed gRPC APIs, run synthetic clients that look like real clients (see Microservices Monitoring: Health Checks Guide)
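On the logging side, you can build a structured formatter on Python's standard `logging` that emits the gRPC method, status code, and request ID on every line. The field names here are assumptions. If you already follow OpenTelemetry semantic conventions, names such as `rpc.method` and `rpc.grpc.status_code` may be a better fit.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """One JSON object per line, with gRPC fields when the caller supplies them."""

    GRPC_FIELDS = ("grpc_method", "grpc_code", "request_id", "duration_ms")

    def format(self, record: logging.LogRecord) -> str:
        entry = {"level": record.levelname, "msg": record.getMessage()}
        for name in self.GRPC_FIELDS:
            if hasattr(record, name):
                entry[name] = getattr(record, name)
        return json.dumps(entry)


def make_logger(name: str = "grpc.access") -> logging.Logger:
    logger = logging.getLogger(name)
    if not logger.handlers:  # avoid duplicate handlers on repeated calls
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger


# Typically called from a server interceptor once the RPC finishes:
# make_logger().info("rpc finished", extra={
#     "grpc_method": "/shop.Cart/Add", "grpc_code": "UNAVAILABLE",
#     "request_id": "req-123", "duration_ms": 41})
```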

If you're running gRPC inside Kubernetes, the patterns in Kubernetes Monitoring: Health Checks and Pod Uptime and the load-shedding considerations in Microservices Monitoring apply directly. For comparison with REST, see REST API Monitoring: Endpoints, Errors, and Performance.

What to Do When gRPC Monitoring Fires

Health protocol returns NOT_SERVING:

- Check pod logs for startup or dependency failures

- Verify downstream dependencies are healthy

- Check whether the service is in graceful shutdown

- Look for recent deploys that might have broken initialization

Surge in UNAVAILABLE:

- Check pod restart rate / rolling deploy in progress

- Verify the load balancer distributes individual requests (L7 or client-side), not just connections

- Look at GOAWAY frame rate

- Check if a single client is hammering one pod (a per-connection balancing problem)

Surge in DEADLINE_EXCEEDED:

- Check downstream service latency

- Look for slow database queries, lock contention

- Verify deadlines are sane (not too tight after a recent change)

- Check whether a single hot key is creating a cascading slowdown

Surge in RESOURCE_EXHAUSTED:

- Check for rate-limit hits — server-side or downstream

- Verify max message size limits for the method

- Look at memory pressure on the server

- Check for any quota changes recently applied

Streaming methods quiet:

- Verify keepalive pings are firing

- Check for client-side bugs in stream consumption

- Look at backend pub/sub or event source health

- Verify the stream isn't being held open without server-side activity

How Webalert Helps

Webalert provides external monitoring for the parts of your gRPC stack that are exposed to clients, plus the supporting infrastructure:

- TLS / SSL monitoring — catch certificate issues on gRPC endpoints before clients see handshake failures

- DNS monitoring — detect resolution failures that prevent clients from reaching your services

- HTTP and TCP checks — monitor the load balancer fronting your gRPC services

- Multi-region checks — confirm externally-exposed gRPC services are reachable globally

- Webhook + heartbeat monitoring — pair with your Prometheus alerts for in-cluster gRPC metrics

- Multi-channel alerts — Email, SMS, Slack, Discord, Microsoft Teams, webhooks

- Status pages — communicate service issues to API consumers transparently

- 5-minute setup — start monitoring the public face of your gRPC stack today

See features and pricing for details.

Summary

- gRPC fails differently than REST. Use the standard gRPC health checking protocol, not custom HTTP endpoints next to your gRPC server.

- Monitor per-method status code distribution, not just aggregate success rate. The 17 standardized codes each tell you something specific.

- Track latency at p95 and p99 per method, and watch the gap to your deadlines.

- Streaming RPCs need their own metrics: open stream count, messages per stream, stream lifetime, cancellation rate.

- Watch connection-level signals (active connections, GOAWAY frames, keepalive success) to catch sticky failures.

- Deadline propagation makes downstream issues cascade; track deadline budget at each hop.

- TLS / mTLS rotation is a leading cause of gRPC outages; monitor cert expiry and handshake failure rates.

gRPC gives you a structured, performant protocol. Monitoring proves it's actually delivering for clients.