Kubernetes is excellent at keeping your workloads running. When a pod crashes, it restarts it. When a node fails, it reschedules to another. When demand spikes, it scales out.

But Kubernetes doesn't tell you whether your users can actually reach your application.

A pod can be marked "Running" while returning 500s. A service can exist while routing to zero healthy pods. An ingress can be configured while SSL termination is broken. Kubernetes is watching the containers — but nobody is watching what users see.

This guide covers the full Kubernetes monitoring picture: what the cluster watches internally, what you need to watch externally, and how to build alerting that catches real failures before your users do.

What Kubernetes Monitors Internally

Before adding external monitoring, understand what Kubernetes already does for you.

Liveness probes

A liveness probe tells Kubernetes whether a container is alive. If the probe fails, Kubernetes kills the container and restarts it.

```yaml
livenessProbe:
  httpGet:
    path: /healthz
    port: 8080
  initialDelaySeconds: 10
  periodSeconds: 10
  failureThreshold: 3
```

If your app deadlocks or gets into an unrecoverable state, the liveness probe catches it and triggers a restart.

Readiness probes

A readiness probe tells Kubernetes whether a container is ready to accept traffic. If the probe fails, Kubernetes removes the pod from the service's endpoints — traffic stops routing to it.

```yaml
readinessProbe:
  httpGet:
    path: /ready
    port: 8080
  initialDelaySeconds: 5
  periodSeconds: 5
  failureThreshold: 2
```

Readiness probes prevent traffic from reaching pods that are still warming up, running database migrations, or temporarily overloaded.

Startup probes

A startup probe gives slow-starting containers time to become ready before liveness probes kick in. Useful for apps with long initialization times.

```yaml
startupProbe:
  httpGet:
    path: /healthz
    port: 8080
  failureThreshold: 30
  periodSeconds: 10
```

What internal probes can't tell you

Kubernetes probes check individual containers from inside the cluster. They don't check:

- Whether external users can reach your service through the ingress

- Whether SSL/TLS termination is working at the load balancer

- Whether DNS resolves your domain to the correct IP

- Whether the service is accessible from all geographic regions

- Whether response content is correct (not just status code 200)

- Whether response times are within acceptable limits for users

For all of that, you need external monitoring.

The Kubernetes Monitoring Stack

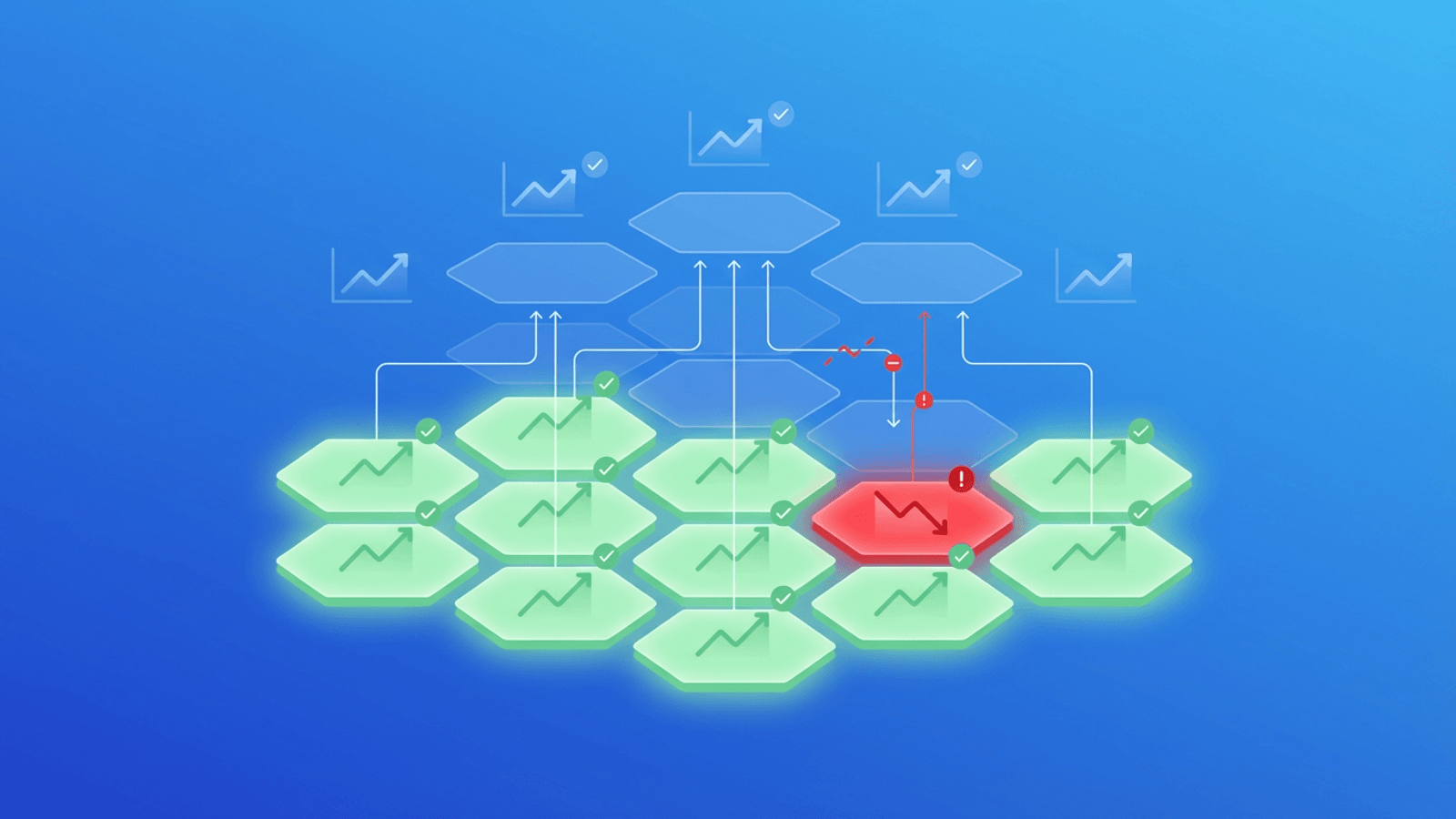

A complete monitoring strategy for Kubernetes has three layers:

Layer 3: External monitoring (user experience)

↕ HTTP checks, SSL, DNS, response time from outside the cluster

Layer 2: Internal observability (cluster internals)

↕ Prometheus, Grafana, resource metrics, pod status

Layer 1: Kubernetes internal probes (container health)

↕ Liveness, readiness, startup probes

Most teams set up Layer 1 (probes) automatically. Many add Layer 2 (Prometheus + Grafana) eventually. But Layer 3 — the external view that mirrors what users experience — is often the last to be added, even though it's the most important for catching real outages.

This guide focuses on Layer 3, which complements your existing K8s health check and observability tooling.

What to Monitor Externally

1. Your public-facing ingress endpoints

The ingress controller is where external traffic enters your cluster. A misconfigured ingress rule, a broken TLS secret, or a failed ingress controller deployment can make your application completely unreachable — while all pods inside the cluster appear healthy.

What to monitor:

- Primary domain (https://yourapp.com)

- API subdomain (https://api.yourapp.com/health)

- Any public-facing service endpoints

- Canary or staging endpoints (if publicly accessible)

Check every 1 minute. Kubernetes deployments and rollouts happen frequently — you want immediate detection if a rollout breaks the ingress.
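A single external check can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not how any particular monitoring product works; the `CheckResult` type and `check_endpoint` function are hypothetical names, and the 10-second timeout is an assumed default. Run it from a machine outside the cluster so the request passes through DNS, the load balancer, and the ingress.

```python
import time
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Optional


@dataclass
class CheckResult:
    url: str
    ok: bool
    status: Optional[int]  # None when no HTTP response was received
    elapsed_s: float
    error: Optional[str] = None


def check_endpoint(url: str, timeout_s: float = 10.0) -> CheckResult:
    """Issue one GET request and record the status code and latency."""
    start = time.monotonic()
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            resp.read()
            status = resp.status
        return CheckResult(url, 200 <= status < 300, status, time.monotonic() - start)
    except urllib.error.HTTPError as exc:
        # 4xx/5xx: something answered, but the request failed.
        return CheckResult(url, False, exc.code, time.monotonic() - start, str(exc))
    except (urllib.error.URLError, OSError) as exc:
        # DNS failure, refused connection, TLS error, or timeout.
        return CheckResult(url, False, None, time.monotonic() - start, str(exc))
```

Calling `check_endpoint("https://yourapp.com")` once a minute from a scheduler gives a basic availability signal. A `status` of `None` points at the network path (DNS, TLS, load balancer), while a 5xx status points at the ingress or the pods behind it.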

2. Service health endpoints

Well-designed Kubernetes applications expose dedicated health endpoints:

- /healthz or /health: basic liveness check (is the process running?)

- /readyz or /ready: readiness check (is the process ready for traffic?)

- /metrics: Prometheus-format metrics (scraped by Prometheus, not for external checks)

Monitor the /healthz and /readyz endpoints from outside the cluster. A 200 response from /readyz through the ingress means traffic is routing correctly through the ingress → service → pod chain.

Content validation tip: Don't just check for status 200. Add a content check that verifies the response body contains "status":"ok" or equivalent. This catches cases where your app returns 200 with an error body (a surprisingly common failure mode).
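A content check of this kind might look like the sketch below. It assumes the health endpoint returns a JSON object with a top-level `status` field, as in the examples in this guide; `body_is_healthy` is a hypothetical helper name.

```python
import json


def body_is_healthy(status_code: int, body: str, expected_status: str = "ok") -> bool:
    """True only if the response is 200 and the JSON body reports the expected status."""
    if status_code != 200:
        return False
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return False  # e.g. an HTML error page served with a 200
    return isinstance(payload, dict) and payload.get("status") == expected_status
```

Parsing the JSON rather than searching for a substring avoids false negatives from whitespace differences such as `"status": "ok"` versus `"status":"ok"`.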

3. SSL/TLS certificates

Kubernetes manages TLS certificates in several ways:

- cert-manager — Automatically provisions and renews Let's Encrypt certificates, storing them as Kubernetes secrets

- Cloud-managed certs — GKE Managed Certificates, AWS ACM via ALB Ingress Controller

- Manual secrets — Self-managed certificates stored as TLS secrets

All of these can fail silently. cert-manager renewal can fail if DNS validation records were removed. Cloud-managed cert renewal can fail after cluster upgrades. Manually managed certs simply expire.

Monitor certificate expiry with alerts at 30, 14, and 7 days. Also monitor certificate validity (correct domain, valid chain) — catch mismatches immediately.
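As a rough sketch, both checks can be done with Python's standard `ssl` module. The function names are hypothetical, and the tiers default to the 30/14/7-day thresholds mentioned above. Connecting with the default context verifies the chain and hostname, so a mismatch surfaces as an `ssl.SSLCertVerificationError` instead of a silent pass.

```python
import socket
import ssl
import time
from typing import Optional, Sequence


def days_until_expiry(host: str, port: int = 443, timeout_s: float = 10.0) -> float:
    """Connect with full verification and return days until the leaf certificate expires."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout_s) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            cert = tls.getpeercert()
    expires_at = ssl.cert_time_to_seconds(cert["notAfter"])
    return (expires_at - time.time()) / 86400


def expiry_alert_tier(days_left: float, tiers: Sequence[int] = (7, 14, 30)) -> Optional[int]:
    """Return the tightest tier (in days) that has been crossed, or None."""
    for tier in sorted(tiers):
        if days_left <= tier:
            return tier
    return None
```

For example, `expiry_alert_tier(days_until_expiry("yourapp.com"))` returns `None` while the certificate has more than 30 days left, then 30, 14, and 7 as each threshold is crossed.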

4. DNS resolution

Your cluster might be perfectly healthy while DNS resolution is broken. This happens when:

- External DNS controller (which syncs K8s services to your DNS provider) has an error

- A Helm release or Terraform apply updated DNS records incorrectly

- Your DNS provider has an outage

Monitor DNS resolution for all critical domains. Verify they resolve to the expected load balancer IP or hostname.
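A basic version of this check, sketched with the standard `socket` module, is shown below. The helper names are hypothetical. It uses the system resolver, which may serve cached answers, so a dedicated monitor that queries from several networks will see changes sooner than this sketch does.

```python
import socket
from typing import Callable, Iterable, Set


def resolve_ips(hostname: str) -> Set[str]:
    """Resolve a hostname to its set of IPv4/IPv6 addresses using the system resolver."""
    infos = socket.getaddrinfo(hostname, None, proto=socket.IPPROTO_TCP)
    return {info[4][0] for info in infos}


def dns_matches(hostname: str, expected: Iterable[str],
                resolver: Callable[[str], Set[str]] = resolve_ips) -> bool:
    """True if the name resolves and every returned address is an expected one."""
    try:
        actual = resolver(hostname)
    except socket.gaierror:
        return False  # NXDOMAIN, resolver timeout, and similar failures
    return bool(actual) and actual <= set(expected)
```

The `resolver` parameter exists so the comparison logic can be exercised without network access. If your load balancer is addressed by hostname rather than a fixed IP, compare against the addresses that hostname currently resolves to instead of a hard-coded set.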

5. Response time

Kubernetes has many failure modes that don't cause outages but severely degrade performance:

- Resource starvation: pods running near their CPU or memory limits slow down

- Pod eviction: under node pressure, low-priority pods are evicted and have to restart

- Horizontal Pod Autoscaler lag: a traffic spike arrives before the HPA has scaled out

- Cold starts: new pods receive traffic before warm-up is complete

- Noisy neighbor: another workload on the same node consumes shared resources

Monitor response time trends. Alert when sustained response time exceeds 2-3x your baseline.
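One way to express "sustained" is to require that every sample in a recent window exceeds the threshold, so a single slow request does not page anyone. The sketch below assumes you already have a baseline figure; the function name, the 3x factor, and the five-sample window are illustrative choices, not recommendations from a specific tool.

```python
from typing import Sequence


def sustained_slowdown(samples_ms: Sequence[float], baseline_ms: float,
                       factor: float = 3.0, window: int = 5) -> bool:
    """True if each of the last `window` samples exceeds factor * baseline."""
    if len(samples_ms) < window:
        return False  # not enough data to call it sustained
    threshold = factor * baseline_ms
    return all(sample > threshold for sample in samples_ms[-window:])
```

With one-minute checks, a window of five means the alert fires after roughly five minutes of consistently slow responses.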

6. Background job heartbeats

Kubernetes CronJobs run scheduled tasks inside the cluster. If a CronJob fails — because the image can't be pulled, the pod can't be scheduled, or the job itself errors — it fails silently from the outside.

Use heartbeat monitoring: configure your CronJob to ping a URL when it completes successfully. If the heartbeat is missed within the expected window, you get an alert.

```yaml
# Example: CronJob that pings Webalert heartbeat on completion
apiVersion: batch/v1
kind: CronJob
metadata:
  name: data-processor
spec:
  schedule: "0 * * * *"
  jobTemplate:
    spec:
      template:
        spec:
          # Job pods must set restartPolicy to OnFailure or Never
          restartPolicy: OnFailure
          containers:
            - name: processor
              image: your-processor:latest
              command:
                - /bin/sh
                - -c
                - |
                  python process.py && \
                  curl -s https://app.web-alert.io/api/heartbeat/YOUR_HEARTBEAT_ID
```
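On the receiving side, the "missed within the expected window" rule comes down to a timestamp comparison. The sketch below is a generic illustration, not Webalert's implementation. The one-hour interval matches the hourly schedule above, and the ten-minute grace period is an assumption to cover job runtime and scheduling jitter.

```python
from datetime import datetime, timedelta


def heartbeat_missed(last_ping: datetime, now: datetime,
                     interval: timedelta = timedelta(hours=1),
                     grace: timedelta = timedelta(minutes=10)) -> bool:
    """True once more than interval + grace has passed since the last ping."""
    return now - last_ping > interval + grace
```

Set the grace period a little longer than the job's typical runtime, since the ping is sent only after `process.py` finishes.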

Common Kubernetes Failure Modes and How to Catch Them

CrashLoopBackOff

What happens: A container crashes repeatedly. Kubernetes keeps restarting it with exponentially increasing delays (10s, 20s, 40s, and so on, capped at five minutes). While it waits between restarts, the pod's status shows CrashLoopBackOff.

External symptom: The service returns 503 or times out because all pods are unavailable.

Detection: External HTTP monitor returns 503 or times out. Alert fires within 1-2 minutes of the crash loop starting.

ImagePullBackOff

What happens: A pod can't pull its container image. This happens when a new deployment references a non-existent image tag, a registry is unreachable, or pull secrets are missing.

External symptom: The new pods never become ready. The deployment stalls. If the rollout replaced all old pods first, the service is unavailable.

Detection: External HTTP monitor detects the service going down during a deployment. Response time spike before the outage can provide early warning.

OOMKilled

What happens: A container exceeds its memory limit and is killed by the OS. Kubernetes restarts it. If the memory issue persists, the cycle continues.

External symptom: Intermittent 503s or connection resets as pods restart.

Detection: External HTTP monitor detects intermittent failures. Alert fires if consecutive checks fail.

Node Not Ready

What happens: A worker node becomes unavailable. Pods on that node are rescheduled to other nodes after a timeout (default 5 minutes).

External symptom: During rescheduling, if there aren't enough resources on other nodes or if the rescheduled pods take time to start, the service is degraded or unavailable.

Detection: External HTTP monitor detects increased error rates or timeouts during the node failure window.

Ingress Controller Failure

What happens: The ingress controller pod crashes or is misconfigured. All traffic to all services behind the ingress stops routing.

External symptom: All services return 502/504 or are completely unreachable.

Detection: External HTTP monitor detects all monitored endpoints failing simultaneously. The "all at once" pattern suggests infrastructure-level failure rather than application-level.
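The "all at once" heuristic can be written down directly. This sketch assumes you have the latest up/down result for each monitored endpoint behind the same ingress; the function name and the three labels are hypothetical.

```python
from typing import Mapping


def classify_failure_scope(results: Mapping[str, bool]) -> str:
    """Map {endpoint: is_up} to 'healthy', 'application', or 'infrastructure'."""
    if not results:
        raise ValueError("no endpoints checked")
    down = [url for url, up in results.items() if not up]
    if not down:
        return "healthy"
    if len(down) == len(results) and len(results) > 1:
        # Everything failed together: suspect the ingress, load balancer, or DNS.
        return "infrastructure"
    return "application"
```

The classification is a hint for triage, not a diagnosis. With only one monitored endpoint there is nothing to compare against, so the sketch falls back to "application".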

Failed Rollout

What happens: A new deployment has a bug. The new pods start but return errors. Kubernetes may or may not roll back depending on your deployment configuration.

External symptom: Error rate increases as traffic routes to unhealthy new pods while old pods are terminated.

Detection: External HTTP monitor detects rising error rates immediately after a deployment. Alerts fire within the first minutes, giving the team time to roll back before all old pods are replaced.

Setting Up Health Check Endpoints

If your application doesn't have dedicated health endpoints yet, add them. They're the foundation of both Kubernetes probes and external monitoring.

Minimal health endpoint

```python
# FastAPI example
from fastapi import FastAPI

app = FastAPI()


@app.get("/healthz")
async def health():
    return {"status": "ok"}
```

```javascript
// Express example
const express = require('express');
const app = express();

app.get('/healthz', (req, res) => {
  res.json({ status: 'ok' });
});
```

```go
// Go example (assumes "net/http" is imported and the server is started elsewhere)
http.HandleFunc("/healthz", func(w http.ResponseWriter, r *http.Request) {
	w.Header().Set("Content-Type", "application/json")
	w.Write([]byte(`{"status":"ok"}`))
})
```

Rich readiness endpoint

A readiness endpoint should verify that all dependencies (database, cache, external services) are reachable:

```python
from fastapi.responses import JSONResponse

# `app`, `db`, and `redis` are assumed to be created elsewhere in the application.


@app.get("/readyz")
async def readiness():
    checks = {}

    # Check database
    try:
        await db.execute("SELECT 1")
        checks["database"] = "ok"
    except Exception as e:
        checks["database"] = f"error: {e}"

    # Check cache
    try:
        await redis.ping()
        checks["cache"] = "ok"
    except Exception as e:
        checks["cache"] = f"error: {e}"

    # Ready only when every dependency check passed
    all_ok = all(v == "ok" for v in checks.values())
    status_code = 200 if all_ok else 503
    return JSONResponse(
        content={"status": "ok" if all_ok else "degraded", "checks": checks},
        status_code=status_code,
    )
```

This gives both Kubernetes readiness probes and external monitoring a meaningful signal — not just "the process is alive" but "all dependencies are healthy."

Alerting for Kubernetes

What to alert on

| Condition | Severity | Channel |
|---|---|---|
| Service endpoint returns 5xx | Critical | SMS + Slack/Discord |
| Service endpoint unreachable (timeout) | Critical | SMS + Slack/Discord |
| SSL certificate expires in 7 days | High | Slack/Discord + email |
| Response time >3x baseline sustained | High | Slack/Discord |
| CronJob heartbeat missed | High | Slack/Discord |
| SSL certificate expires in 30 days | Medium | |
| DNS resolution changed unexpectedly | Medium | Slack/Discord |

Alert on deployments

The riskiest moment for any Kubernetes service is during a deployment. Consider temporarily increasing monitoring sensitivity around deployments:

- Check every 30 seconds during active rollouts (if your monitoring tool supports it)

- Alert after 1 consecutive failure (instead of the usual 2-3) during the deployment window

- Enable all notification channels during planned releases
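The tighter threshold during rollouts can be modeled as a consecutive-failure counter whose limit depends on whether a deployment is in progress. This is a sketch under assumptions: how `deploying` gets set (for example, from your CI pipeline) is left open, and the defaults of 3 and 1 mirror the numbers in the list above.

```python
from typing import Sequence


def should_alert(recent_checks: Sequence[bool], deploying: bool,
                 normal_threshold: int = 3, deploy_threshold: int = 1) -> bool:
    """recent_checks is ordered oldest to newest; True means the check passed."""
    threshold = deploy_threshold if deploying else normal_threshold
    consecutive_failures = 0
    for passed in reversed(recent_checks):
        if passed:
            break
        consecutive_failures += 1
    return consecutive_failures >= threshold
```

Only the trailing run of failures counts, so a failure that has already been followed by a passing check does not trigger an alert.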

Recovery notifications

Always configure recovery notifications. Kubernetes often resolves issues automatically (pod restart, rescheduling, HPA scale-out). A recovery notification confirms the incident is over without requiring the team to manually verify.
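Recovery notifications fall out of tracking state transitions instead of individual check results. A minimal sketch, with a hypothetical function name and event labels:

```python
from typing import Optional


def transition_event(was_up: bool, is_up: bool) -> Optional[str]:
    """Return 'down' or 'recovered' when the state changes, otherwise None."""
    if was_up and not is_up:
        return "down"
    if not was_up and is_up:
        return "recovered"
    return None
```

Notifying only on transitions also keeps a long outage from producing a new alert on every failed check.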

Multi-Region Monitoring for Kubernetes

If your cluster serves users globally, monitor from multiple regions.

A Kubernetes node failure in one availability zone might only affect users routed to that zone. Multi-region monitoring catches failures that are geographically isolated:

- Regional load balancer routing issue

- Availability zone with degraded node pool

- CDN routing users in a specific geography to a broken origin

Check from at least three geographic regions: US, Europe, and Asia-Pacific. This also helps you catch DNS propagation issues after cluster migrations or IP changes.
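Combining per-region results is what separates a regional problem from a global one. The sketch below assumes each region reports a single up/down result for the same endpoint; the region keys and return labels are illustrative.

```python
from typing import List, Mapping, Tuple


def summarize_regions(results: Mapping[str, bool]) -> Tuple[str, List[str]]:
    """Map {region: is_up} to an overall verdict and the list of failing regions."""
    failing = sorted(region for region, up in results.items() if not up)
    if not failing:
        return "ok", []
    if len(failing) == len(results):
        return "global_outage", failing
    return "regional_outage", failing
```

For example, `summarize_regions({"us": True, "eu": False, "apac": True})` returns `("regional_outage", ["eu"])`, which points toward a regional load balancer, an availability zone, or CDN routing instead of the application itself.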

How Webalert Monitors Kubernetes

Webalert provides the external monitoring layer that complements your cluster's internal health checks:

- HTTP/HTTPS monitoring — Check ingress endpoints every 1 minute with content validation

- SSL monitoring — Certificate expiry and validity for cert-manager and cloud-managed certs

- DNS monitoring — Verify external DNS controller is keeping records in sync

- TCP port monitoring — Check any publicly exposed service port

- Response time tracking — Detect degradation from resource starvation, HPA lag, or noisy neighbors

- Heartbeat monitoring — Verify Kubernetes CronJobs complete successfully on schedule

- Multi-region checks — Catch availability zone and regional failures

- Deployment-aware alerting — Tight thresholds during rollouts, on-call escalation when things go wrong

- Status pages — Keep users informed during cluster incidents

Kubernetes watches your containers. Webalert watches what your users see.

See features and pricing for the full details.

Summary

- Kubernetes probes (liveness, readiness, startup) monitor containers from inside the cluster. They're essential but only see part of the picture.

- External monitoring catches what probes miss: ingress failures, SSL issues, DNS problems, geographic outages, and content errors.

- Monitor the full chain: domain → DNS → SSL → ingress → service → pod → response body.

- Key external checks: HTTP on ingress endpoints, SSL certificate validity and expiry, DNS resolution, response time, CronJob heartbeats.

- Common K8s failures detected by external monitoring: CrashLoopBackOff (service goes down), ImagePullBackOff (deployment breaks service), OOMKilled (intermittent errors), node failures (temporary degradation), failed rollouts (error spike during deployment).

- Alert with context: critical for outages, high for degradation, medium for upcoming expiries. Always send recovery notifications.

Your cluster is self-healing. Your monitoring should be self-aware.