Your uptime monitor says everything is green. Your status page shows zero incidents. Your error budget is healthy.

Then a customer pings: "your API has been failing for the last twenty minutes."

You check the logs. The errors are 429s, not 500s. Your service didn't crash. The third-party API you depend on started rate-limiting you because a scheduled job kicked off at the same time as a traffic spike, and you've been quietly cut off from your payment gateway, your email provider, or your AI vendor for the last twenty-three minutes. Nothing is technically down. Everything is technically broken.

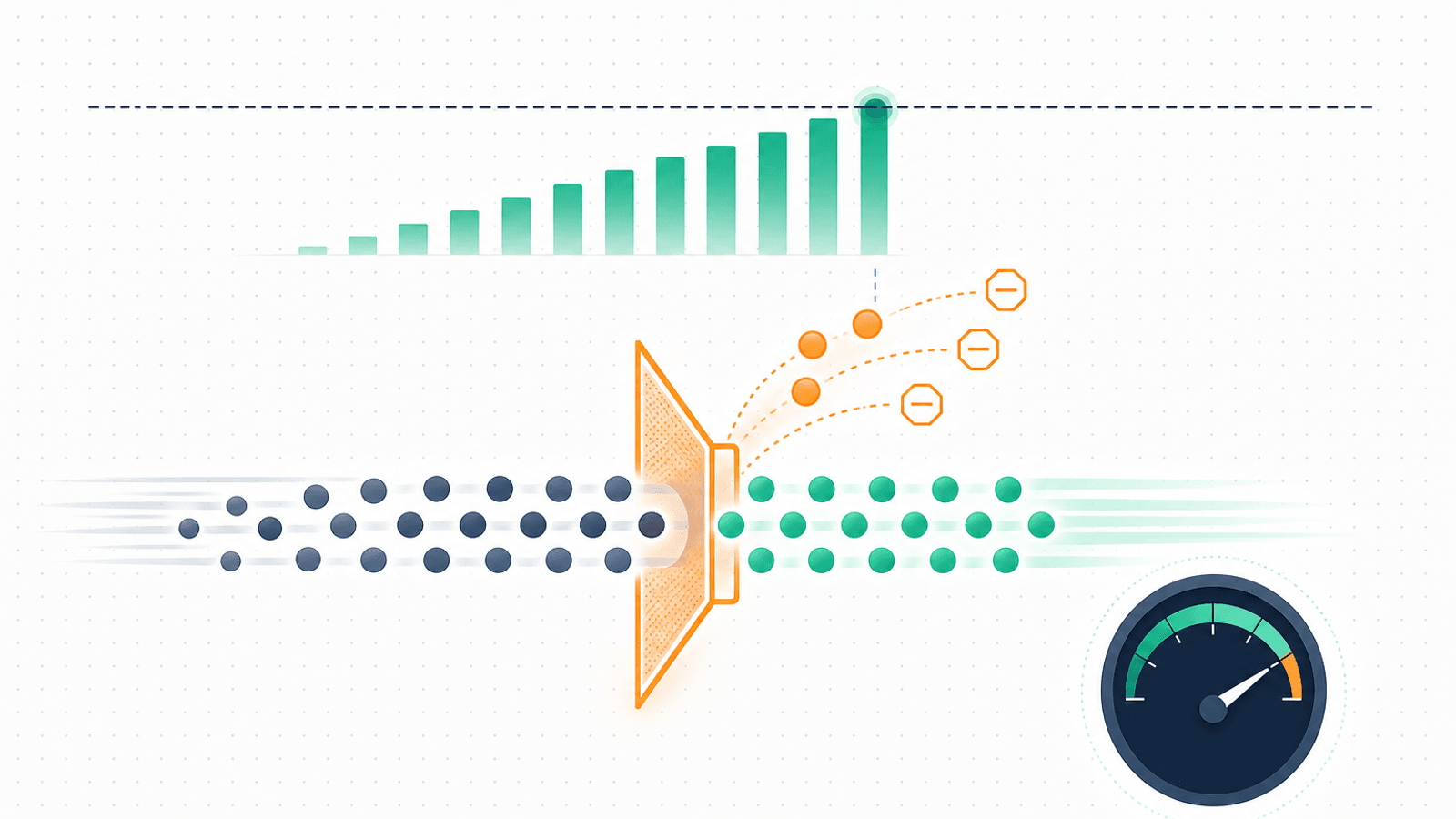

Rate limits are the invisible outage. You're not down — you're just being told "no" by a service you depend on. Your in-app health checks pass. Your status pages stay green. Your customers experience an outage anyway.

This guide covers how rate limits actually work, the three failure modes they cause, what to monitor, how to read the headers that tell you you're about to be cut off, and the alerting and backoff patterns that turn rate limits from a silent killer into a managed risk.

Why Rate Limits Are a Hidden Uptime Problem

Most monitoring is built around two states: working (2xx) and broken (5xx). Rate limits introduce a third state: throttled — your request is technically valid, technically reachable, technically authenticated, and yet refused.

Three things make this category of failure especially dangerous:

- They don't look like outages. Logs show 429s, not 500s. Health checks pass. Your provider's status page stays green because they are fine — you just hit a cap.

- They cascade silently. A few 429s in a non-critical path are easy to ignore. What gets missed is that they are often a leading indicator of a larger problem (a runaway job, a viral traffic spike, a misconfigured client), and nobody connects the dots until something user-facing breaks.

- They invert your assumptions about traffic patterns. Your fastest growth periods — viral launches, Black Friday, a press hit — are exactly when you'll hit rate limits. Your monitoring needs to scale with traffic, but rate limits are a ceiling that doesn't.

The strategic point: when you depend on third-party APIs, you've outsourced a portion of your uptime to those vendors' rate-limit decisions. Your monitoring needs to surface that risk before it becomes an incident.

The Three Rate-Limit Failure Modes

Rate limits cause incidents in three distinct shapes, each requiring different monitoring.

1) You are limited by an upstream API

This is the most common scenario. Your service calls Stripe / OpenAI / Twilio / GitHub / SendGrid and gets back 429s.

- Detection: 429 rate on your outbound calls climbs above baseline

- Impact: features that depend on that vendor degrade or fail

- Root cause: traffic spike on your side, a scheduled job firing, a bug that loops on retries, or your vendor lowering your limit

- Mitigation speed: depends on the vendor; sometimes a support request away, sometimes you have to wait until the window resets

2) Your service rate-limits incoming requests

You operate a rate limiter for protection. Eventually it fires when it shouldn't.

- Detection: 429 rate on your inbound traffic climbs unexpectedly

- Impact: legitimate customers get throttled and complain

- Root cause: a customer with a real use case for higher throughput, a misconfigured limit, an attack that triggered the limiter, or shared IP collisions

- Mitigation speed: usually a configuration change

3) Customers of your API hit limits

This is the variant of #2 from the customer's perspective and the angle that drives churn:

- Detection: support tickets, customer-side error rate, your own dashboards showing a high 429 rate for specific accounts

- Impact: customer experience and trust

- Root cause: limits set too low for real workloads, or customers building integrations that don't respect the limits

- Mitigation speed: depends on whether you adjust limits or push them to fix their client

Treat each of these as a distinct monitoring problem with its own dashboards, thresholds, and on-call playbooks.

How Rate Limits Actually Work

Monitoring is more effective when you understand the underlying mechanism. The three common algorithms:

Token bucket

A "bucket" holds N tokens. Each request consumes a token. Tokens refill at a fixed rate. If the bucket is empty, requests are throttled.

- Allows short bursts (use the whole bucket at once)

- Average throughput is bounded by the refill rate

- Used by Stripe, AWS, and many cloud APIs
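
To make the mechanism concrete, here is a minimal token-bucket sketch. It is an illustration of the algorithm described above, not any vendor's actual implementation; the class name, parameters, and injectable `clock` are assumptions made for the example.

```python
import time


class TokenBucket:
    """Holds up to `capacity` tokens, refilled at `refill_rate` tokens per second."""

    def __init__(self, capacity: float, refill_rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_rate = refill_rate
        self.clock = clock          # injectable so behaviour can be checked without sleeping
        self.tokens = capacity      # start full, which is what permits an initial burst
        self.last_refill = clock()

    def allow(self) -> bool:
        """Consume one token if available; False means the request would be throttled."""
        now = self.clock()
        elapsed = now - self.last_refill
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

With `capacity=3` and `refill_rate=1.0`, three back-to-back calls succeed and the fourth is refused; two seconds later two more tokens are available. The burst size comes from the capacity, the sustained rate from the refill.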

Fixed window

X requests allowed per Y seconds, counted from the start of each window.

- Simple but allows a 2X burst at the window boundary (X at the end of one window, X immediately at the start of the next)

- Used by GitHub (primary limit) and many basic limiters
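
The boundary burst is easier to see in code. This is a small sketch of a fixed-window counter with made-up numbers (5 requests per 60 seconds); the class and its `allow(now)` signature are hypothetical, chosen so the clock can be passed in explicitly.

```python
class FixedWindowLimiter:
    """Allows `limit` requests per window; the counter resets at each window boundary."""

    def __init__(self, limit: int, window_seconds: int):
        self.limit = limit
        self.window_seconds = window_seconds
        self.current_window = None
        self.count = 0

    def allow(self, now: float) -> bool:
        window = int(now // self.window_seconds)   # index of the window `now` falls in
        if window != self.current_window:
            self.current_window = window           # new window: start counting from zero
            self.count = 0
        if self.count < self.limit:
            self.count += 1
            return True
        return False


limiter = FixedWindowLimiter(limit=5, window_seconds=60)
late = sum(limiter.allow(59.9) for _ in range(5))    # last moments of window 0
early = sum(limiter.allow(60.0) for _ in range(5))   # first moments of window 1
burst = late + early                                  # accepted within about 0.1 seconds
```

Here `burst` is 10, twice the nominal limit, even though no single window was ever over its cap.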

Sliding window

Like fixed window, but the count is over a rolling time window rather than a fixed boundary.

- Smoother behavior, harder for clients to "game" the boundary

- Used by Cloudflare and many CDN-layer limiters
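
For comparison, here is a sketch of one sliding-window variant (a timestamp log). Real limiters often use cheaper approximations, so treat this as an illustration of the idea rather than how any particular vendor does it; the names are hypothetical.

```python
from collections import deque


class SlidingWindowLimiter:
    """Allows at most `limit` requests in any rolling `window_seconds` interval."""

    def __init__(self, limit: int, window_seconds: float):
        self.limit = limit
        self.window_seconds = window_seconds
        self.timestamps = deque()   # times of accepted requests, oldest first

    def allow(self, now: float) -> bool:
        cutoff = now - self.window_seconds
        # Drop accepted requests that have aged out of the rolling window.
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()
        if len(self.timestamps) < self.limit:
            self.timestamps.append(now)
            return True
        return False
```

With a limit of 5 per 60 seconds, five requests at t=59.9 are accepted, but a request at t=60.0 is refused because the previous five are still inside the rolling window. The boundary trick that works against a fixed window does not work here.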

You usually can't tell which algorithm a vendor uses from the outside, but the behavior of the retry-after and x-ratelimit-* headers gives clues. Token-bucket APIs typically refill steadily; fixed-window APIs reset abruptly at clock boundaries.

Per-endpoint vs global limits

Most large APIs have layered limits:

- Global per-account (e.g., 100,000 requests/hour total)

- Per-endpoint (e.g., 500 requests/minute on `/messages` even if your global limit is 100,000/hour)

- Per-resource (e.g., 1 write/sec to a single Firestore document)

- Burst limits separate from sustained limits

You can be well under your global limit and still be rate-limited on one specific endpoint. Monitor per-endpoint rates separately.

Reading the Headers That Tell You You're About to Be Cut Off

The single highest-leverage rate-limit monitoring practice: don't wait for 429s. Read the headers on successful responses.

Most well-behaved APIs return x-ratelimit-* headers on every response (200s included). They tell you exactly how much headroom you have left.

Common header conventions

| Header | What it means |
|---|---|
| `x-ratelimit-limit` | Total requests allowed in the window |
| `x-ratelimit-remaining` | Requests left before throttling |
| `x-ratelimit-reset` | When the window resets (unix timestamp or seconds) |
| `retry-after` | (On 429s) How long to wait before retrying |

Per-provider naming

- GitHub: `x-ratelimit-limit`, `x-ratelimit-remaining`, `x-ratelimit-reset`, plus `x-ratelimit-resource` to identify which bucket

- Stripe: `stripe-should-retry`, `idempotency-key` related; primarily uses 429 with `retry-after`

- OpenAI / Anthropic: token-based; OpenAI returns `x-ratelimit-remaining-requests` and `x-ratelimit-remaining-tokens`, while Anthropic uses its own prefix (`anthropic-ratelimit-requests-remaining`, `anthropic-ratelimit-tokens-remaining`)

- Twilio: 429 with `retry-after`, no headroom header

- Shopify: `x-shopify-shop-api-call-limit` in the format `<current>/<max>`

- AWS: no standard header; relies on retryable error responses

The pattern: log the rate-limit headers from every API call, aggregate them in your metrics pipeline, and watch the trend.
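
As a starting point for that logging, here is a hedged sketch that normalizes two of the header styles above into a single "fraction remaining" number you can emit as a metric. The function name is hypothetical, only the GitHub-style and Shopify-style headers are handled, and the Shopify branch accepts both the spelling used in this guide and `x-shopify-shop-api-call-limit`.

```python
from typing import Mapping, Optional


def remaining_fraction(headers: Mapping[str, str]) -> Optional[float]:
    """Return remaining quota as a fraction in [0, 1], or None if no known header is present."""
    h = {k.lower(): v for k, v in headers.items()}   # header names are case-insensitive

    # Shopify style: "<current>/<max>", where <current> is the number of calls already used.
    for name in ("x-shopify-shop-api-call-limit", "x-shopify-api-call-limit"):
        if name in h:
            used, maximum = (int(part) for part in h[name].split("/"))
            return (maximum - used) / maximum if maximum else None

    # GitHub style: separate limit and remaining headers.
    if "x-ratelimit-limit" in h and "x-ratelimit-remaining" in h:
        limit = int(h["x-ratelimit-limit"])
        return int(h["x-ratelimit-remaining"]) / limit if limit else None

    return None   # provider exposes no headroom header we recognise
```

A response carrying `x-ratelimit-limit: 5000` and `x-ratelimit-remaining: 1000` comes back as `0.2`. Other providers need their own branch, and a `None` result is a cue to fall back to local request counting.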

Monitor Headroom, Not Just Errors

If you only monitor 429s, you're alerting after the failure. By the time the error rate climbs, your service is already degraded.

Monitor remaining quota instead:

- Alert at 20% remaining — investigate; something is consuming faster than expected

- Alert at 10% remaining — likely to be throttled in the current window

- Track the rate at which remaining quota drops: a quota falling 5 percentage points per minute goes from 20% to zero in 4 minutes, so the burn rate matters as much as the current level

Plot remaining quota over time. The shape of the curve tells you whether you're approaching the limit linearly (predictable) or in a runaway pattern (a bug or a spike).
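
The burn-rate idea can be sketched as a simple linear projection. This assumes you already collect `(minute, remaining_pct)` samples; the function name and the first-to-last slope are simplifications for illustration, and a real pipeline would more likely use its metrics system's rate functions.

```python
def minutes_until_exhausted(samples):
    """Estimate minutes until quota hits zero.

    `samples` is a list of (minute, remaining_pct) tuples, oldest first.
    Returns None when there is too little data or the quota is not shrinking.
    """
    if len(samples) < 2:
        return None
    (t0, r0), (t1, r1) = samples[0], samples[-1]
    if t1 <= t0:
        return None
    burn_per_minute = (r0 - r1) / (t1 - t0)   # percentage points consumed per minute
    if burn_per_minute <= 0:
        return None                            # flat or refilling: no exhaustion in sight
    return r1 / burn_per_minute
```

For samples `[(0, 30.0), (1, 25.0), (2, 20.0)]` the burn rate is 5 points per minute and the estimate is `4.0` minutes. Alerting on this number catches a runaway pattern earlier than a fixed percentage threshold does.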

For APIs that don't expose remaining headers, fall back to local instrumentation:

- Count outbound requests per endpoint per minute

- Compare against your known limit

- Alert when the rolling rate approaches the cap
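
The three steps above can be sketched as a small in-process tracker. The class name, the 80% warning ratio, and the 60-second window are assumptions for the example; with multiple instances of your service you would need to aggregate counts centrally rather than per process.

```python
from collections import defaultdict, deque


class OutboundRateTracker:
    """Counts outbound calls per endpoint over a rolling 60-second window."""

    def __init__(self, limit_per_minute: int, warn_ratio: float = 0.8):
        self.limit_per_minute = limit_per_minute   # the vendor limit you know from docs
        self.warn_ratio = warn_ratio               # warn at 80% of the cap by default
        self.calls = defaultdict(deque)            # endpoint -> timestamps, oldest first

    def record(self, endpoint: str, now: float) -> None:
        self.calls[endpoint].append(now)

    def rate(self, endpoint: str, now: float) -> int:
        q = self.calls[endpoint]
        while q and q[0] <= now - 60:              # drop calls older than the window
            q.popleft()
        return len(q)

    def near_cap(self, endpoint: str, now: float) -> bool:
        return self.rate(endpoint, now) >= self.warn_ratio * self.limit_per_minute
```

Call `record()` wherever the outbound request is made and check `near_cap()` from the same place you emit metrics.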

Per-Provider Specifics

Stripe

- Live mode: 100 read req/sec, 100 write req/sec per account

- Test mode: 25 req/sec for both

- Returns 429 with `stripe-should-retry` and the standard `retry-after`

- Failure mode to watch: bulk migration scripts running against live mode hit the cap quickly

- Mitigation: idempotency keys + exponential backoff; or contact Stripe for higher limits

GitHub

- Primary limit: 5,000 req/hour for authenticated REST, 60/hour for unauthenticated

- Secondary limits: short bursts, content creation, concurrent requests — these are stricter and often surprise teams

- Headers: `x-ratelimit-resource` tells you which bucket

- Failure mode to watch: pagination through many issues / PRs eats quota fast

- Mitigation: GraphQL API for queries (different limit), conditional requests with ETags

Twilio

- Per-account concurrency limits rather than rate-per-second on most APIs

- SMS throttling by carrier (separate from API rate limits)

- Failure mode to watch: marketing campaigns that send to thousands at once

- Mitigation: queue + worker pattern; request a concurrency increase ahead of campaigns

OpenAI / Anthropic / LLM Providers

- Token-based limits (TPM = tokens per minute) and request-based limits (RPM = requests per minute)

- Tier-based — your limit climbs with usage history

- Returns 429 for limit errors and 529 for Anthropic-specific "overloaded" responses

- Failure mode to watch: long prompts eat TPM even at low request volume

- Mitigation: see AI API Monitoring: OpenAI, Anthropic, and Gemini Uptime for the full pattern

AWS

- API throttling is the norm across all AWS APIs (DynamoDB, S3, etc.)

- Returns various codes: `ThrottlingException`, `ProvisionedThroughputExceededException`, etc.

- Failure mode to watch: cold-start backfill jobs that scan large tables

- Mitigation: SDK clients have built-in backoff, but tune it; consider rate-limiting at the application layer

Shopify, SendGrid, Mailgun, others

Each has its own conventions; the pattern is the same: log the headers, monitor remaining, alert before failure.

429 vs 503 vs 529 vs Other Throttling Codes

Different platforms use different codes for "we won't serve you right now":

- 429 Too Many Requests — the standard rate-limit code

- 503 Service Unavailable — sometimes used when a service is intentionally refusing traffic (and is sometimes confused with capacity issues)

- 529 Overloaded — non-standard; used by Anthropic and Twitch to mean "model/service overloaded" (a soft rate limit)

- 509 Bandwidth Limit Exceeded — non-standard but seen on some hosting platforms

- AWS-specific exception names: `ThrottlingException`, `TooManyRequestsException`, `ProvisionedThroughputExceededException`

Distinguish them in your monitoring. A 429 means "back off and try again later." A 503 from a normally-healthy service might mean "everyone is being told no" rather than just you. A 529 from Anthropic means "the model is overloaded right now" — retry with backoff, not a different action.
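
One way to keep these apart in your metrics is to tag each failed call with a throttle class before it reaches the dashboard. The sketch below is an assumption about how you might label them; the label names and the function are hypothetical, and the AWS branch simply matches the exception names listed above.

```python
AWS_THROTTLE_CODES = {
    "ThrottlingException",
    "TooManyRequestsException",
    "ProvisionedThroughputExceededException",
}


def classify_throttle(status: int, error_code: str = "") -> str:
    """Map a response to a coarse throttle label for metrics and alert routing."""
    if error_code in AWS_THROTTLE_CODES:
        return "rate_limited"          # AWS signals throttling by exception name
    if status == 429:
        return "rate_limited"          # you specifically hit a cap
    if status == 529:
        return "provider_overloaded"   # non-standard: the provider is saturated
    if status == 503:
        return "service_unavailable"   # possibly everyone is being refused
    if status == 509:
        return "bandwidth_exceeded"    # non-standard hosting-platform code
    return "not_throttling"
```

Emitting the label as a metric dimension lets a 429 alert and a 503 alert have different thresholds and different runbooks.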

See HTTP Status Codes Explained: A Monitoring Guide for the full status code map.

Client-Side Backoff Strategy

Once you're being rate-limited, how you retry determines whether you recover or cascade.

The wrong way

- Retry immediately on every 429

- Retry in a tight loop

- All clients retry in lockstep when the window resets

The last one — the thundering herd — is the most common cascade. The window resets, every backed-up client retries at exactly the same second, the limit is immediately hit again, everyone gets 429 again.

The right way

- Respect the `retry-after` header when it's present

- Exponential backoff when it's not (wait 1s, 2s, 4s, 8s, 16s, ...)

- Add jitter — randomize the wait by ±25% so clients don't synchronize

- Cap the maximum backoff — usually 60 seconds is reasonable; longer waits should escalate to alerts rather than indefinite retries

- Use circuit breakers — after N consecutive 429s, stop calling the API for a cooling period rather than keeping the pressure on

- Distinguish retryable vs non-retryable: a 429 with `retry-after` is retryable; a 401 (auth failed) is not
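
The list above can be sketched as two small pieces: a delay calculator and a circuit breaker. All names and defaults here are illustrative assumptions. In particular, `retry_after` is assumed to be the seconds form of the header (not an HTTP date), and the set of retryable codes is a judgment call you should adapt per provider.

```python
import random

RETRYABLE_STATUSES = {429, 503, 529}   # assumption: 401 and other 4xx are not retried


def backoff_delay(attempt, retry_after=None, base=1.0, cap=60.0,
                  jitter=0.25, rng=random.random):
    """Seconds to wait before retry number `attempt` (0-based)."""
    if retry_after is not None:
        return float(retry_after)                    # never retry sooner than the server asked
    delay = min(cap, base * 2 ** min(attempt, 16))   # 1s, 2s, 4s, ... capped
    jittered = delay * (1 + jitter * (2 * rng() - 1))  # spread clients by +/- 25%
    return min(cap, jittered)


class CircuitBreaker:
    """Stops calls for `cooldown` seconds after `threshold` consecutive 429s."""

    def __init__(self, threshold=5, cooldown=30.0):
        self.threshold = threshold
        self.cooldown = cooldown
        self.consecutive = 0
        self.open_until = 0.0

    def allow(self, now):
        return now >= self.open_until

    def record(self, status, now):
        if status == 429:
            self.consecutive += 1
            if self.consecutive >= self.threshold:
                self.open_until = now + self.cooldown   # trip: pause all calls
                self.consecutive = 0
        else:
            self.consecutive = 0                        # any other outcome resets the streak
```

A caller would check `breaker.allow(now)` before each request, call `breaker.record(status, now)` after it, and sleep for `backoff_delay(attempt, retry_after)` only when the status is in `RETRYABLE_STATUSES`.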

A practical pattern: queue rate-limited requests rather than retrying immediately. The queue smooths bursts; the API never sees the spike that would have hit the limit.
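
To illustrate how a queue smooths a burst, here is a sketch that only computes when each queued request would be sent; it does not perform any I/O. The function name and the fixed spacing of `1 / max_per_second` are assumptions for the example, and a production worker would also handle retries and priorities.

```python
def schedule_sends(arrival_times, max_per_second):
    """Return send times so consecutive sends are at least 1/max_per_second apart."""
    interval = 1.0 / max_per_second
    send_times = []
    next_free = float("-inf")            # earliest time the next send is allowed
    for arrival in sorted(arrival_times):
        send_at = max(arrival, next_free)   # wait in the queue if the slot is taken
        send_times.append(send_at)
        next_free = send_at + interval
    return send_times
```

Four requests arriving together at t=0 with `max_per_second=2` are sent at 0, 0.5, 1.0, and 1.5 seconds. The vendor sees a steady 2 requests per second instead of a burst of four.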

Building Rate-Limit Budget Into Capacity Planning

Most teams plan capacity around their own infrastructure: CPU, memory, database. They rarely plan capacity around their third-party rate limits.

A simple practice: for each critical third-party API, document:

- The current rate limit (per second, per minute, per hour)

- The peak observed usage in the last 30 days

- The expected usage at 2× current traffic

- The headroom (limit minus expected usage)

- The contact path for increasing the limit

Review this document before launches and marketing events, and at least once a quarter. If the headroom is less than 50% for a critical dependency, increase the limit before you need it. Many vendors can raise limits within hours if you ask ahead of time; very few can do it in the middle of an incident.
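
The headroom arithmetic is simple enough to keep next to the document itself. A minimal sketch, assuming per-minute units and interpreting "headroom" as a percentage of the limit; the class and field names are hypothetical and the figures in the usage note are made up.

```python
from dataclasses import dataclass


@dataclass
class VendorBudget:
    name: str
    limit_per_minute: float
    peak_per_minute: float       # peak observed usage over the last 30 days
    growth_factor: float = 2.0   # plan for 2x current traffic

    @property
    def expected_per_minute(self) -> float:
        return self.peak_per_minute * self.growth_factor

    @property
    def headroom_pct(self) -> float:
        # Headroom = limit minus expected usage, expressed as a share of the limit.
        return 100.0 * (self.limit_per_minute - self.expected_per_minute) / self.limit_per_minute

    def needs_increase(self, min_headroom_pct: float = 50.0) -> bool:
        return self.headroom_pct < min_headroom_pct
```

A vendor with a limit of 1,000/minute and a 30-day peak of 400/minute has an expected 800/minute at 2× traffic, leaving 20% headroom, so `needs_increase()` returns `True`.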

For more on the broader strategy, see Third-Party Dependency Monitoring: What You Don't Control.

Alert Thresholds That Work

Rate-limit alerts are noisy if you treat every 429 as an incident. Tune for signal:

For outbound APIs (you depend on a vendor)

- Any 429 in 1 hour for a non-critical API: log only

- 5+ 429s in 5 minutes: notification to channel

- Sustained 429 rate > 1% for 5 minutes: page on-call

- Sustained 429 rate > 5%: page on-call as emergency

- Headroom < 20% on critical API: notification (preventative)

- Headroom < 10% on critical API: page (about to fail)
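
The outbound thresholds above can be expressed as a single routing function. This sketch assumes the counts cover one 5-minute evaluation window, that "sustained" has already been established by your alerting system, and that a few 429s on a critical API are logged the same way as on a non-critical one. The severity labels are hypothetical.

```python
def outbound_severity(errors_429, total_requests, headroom_pct=None, critical=True):
    """Map one 5-minute window of outbound traffic to an alert severity."""
    rate = errors_429 / total_requests if total_requests else 0.0
    low_headroom = critical and headroom_pct is not None

    if rate > 0.05:
        return "page_emergency"
    if rate > 0.01:
        return "page"
    if low_headroom and headroom_pct < 10:
        return "page"                      # about to fail
    if errors_429 >= 5:
        return "notify"
    if low_headroom and headroom_pct < 20:
        return "notify"                    # preventative
    if errors_429 > 0:
        return "log"
    return "ok"
```

Checking the most severe conditions first means a window that trips several thresholds is routed once, at the highest level.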

For inbound APIs (your customers hitting your limits)

- Total 429 rate > 0.5% sustained: notification (might be a customer with a real use case)

- Single customer 429 rate > 5% of their requests: notification (specifically this customer is being throttled — opens a sales/support conversation)

- Total 429 rate > 5%: page (your limits are probably miscalibrated or you're under attack)

See Alert Fatigue: Notifications That Get Acted On for the broader principles.

Rate Limit Incidents You Can Plan For

Some rate-limit incidents are predictable. Plan for them:

- Deploys — a deploy can replay a queue of requests, spiking outbound API calls. Pre-deploy: confirm headroom. Post-deploy: monitor 429 rate tightly for 15 minutes.

- Promotional emails / campaigns — a 100K-user email that triggers a webhook can fire 100K API calls in minutes. Pre-warm: notify vendors, increase limits temporarily, throttle on your side.

- Scheduled jobs — nightly batch jobs that hit external APIs can run into rate limits if data has grown. Track job duration trends; alert when a job's API call count grows > 20% week-over-week.

- Launch events — viral launches can 10× outbound calls in minutes. Pre-launch: request quota increases from every critical vendor; have a "throttle mode" ready that defers non-essential calls.

- Failed integrations — a customer integration that loops on errors can consume your quota. Per-customer rate limits prevent one customer from starving the others.
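
The week-over-week check for scheduled jobs is a one-liner worth writing down. A sketch, assuming you record each job's total API call count per week; the function name is hypothetical and the 20% default comes from the scheduled-jobs item above.

```python
def call_growth_alert(last_week_calls, this_week_calls, threshold=0.20):
    """True when a job's API call count grew by more than `threshold` week-over-week."""
    if last_week_calls <= 0:
        return this_week_calls > 0     # a job that newly started calling the API is worth a look
    growth = (this_week_calls - last_week_calls) / last_week_calls
    return growth > threshold
```

A job that went from 1,000 to 1,250 calls triggers the alert; one that went from 1,000 to 1,100 does not.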

Setting Up a Rate-Limit Uptime Check

You can't directly monitor "are we close to a rate limit?" with an external uptime tool, but you can monitor "is the API returning rate-limit errors right now?" Build an internal endpoint that:

- Performs a single light call to each critical third-party API

- Returns the current `x-ratelimit-remaining` and `x-ratelimit-limit` headers it received

- Returns a `healthy` boolean based on whether headroom is acceptable

Then point your external uptime monitor at that endpoint:

```http
GET https://yourapp.com/internal/rate-limit-canary
Authorization: Bearer <monitoring-key>
```

Returning a response like:

```json
{
  "stripe": { "remaining_pct": 87, "healthy": true },
  "openai": { "remaining_pct": 42, "healthy": true },
  "twilio": { "remaining_pct": 8, "healthy": false }
}
```

Your uptime monitor can then alert on the canary endpoint failing or returning unhealthy. For the underlying patterns, see REST API Monitoring: Endpoints, Errors, and Performance and Webhook Monitoring: Ensure Your Integrations Never Fail Silently.
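
The body of such a canary can be sketched independently of any web framework. The function below turns `(remaining, limit)` observations into the response shape shown above. How you obtain those numbers (headers or local counters), the 10% health threshold, and the choice of a 503 status for "unhealthy" are all assumptions for illustration.

```python
def canary_report(observations, min_remaining_pct=10):
    """Build the canary response body.

    `observations` maps vendor name -> (remaining, limit), as read from the
    most recent light call to that vendor or from local counters.
    """
    report = {}
    for vendor, (remaining, limit) in observations.items():
        pct = round(100 * remaining / limit) if limit else 0
        report[vendor] = {"remaining_pct": pct, "healthy": pct >= min_remaining_pct}
    return report


def canary_status(report):
    """HTTP status for the endpoint: 200 if every vendor is healthy, else 503."""
    return 200 if all(v["healthy"] for v in report.values()) else 503
```

Returning a non-200 status when any vendor is unhealthy lets a plain status-code check catch the problem, while content validation on the `healthy` fields gives you per-vendor detail.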

Rate-Limit Monitoring Checklist

For every critical third-party API integration:

- Rate-limit headers logged from every response

- `x-ratelimit-remaining` (or equivalent) tracked as a metric over time

- Headroom alerts at 20% and 10%

- 429 rate tracked per endpoint, separately from 5xx

- `retry-after` header always respected by client code

- Exponential backoff with jitter on retries

- Circuit breaker after N consecutive 429s

- Per-customer rate-limit tracking (for your own API)

- Capacity-planning doc with headroom for each critical vendor

- Vendor contact paths for limit increases documented

- Pre-deploy and pre-event headroom checks in your launch checklist

- Synthetic rate-limit canary endpoint with external monitoring

How Webalert Helps Monitor Rate Limits

Webalert is built for monitoring exactly the kind of API endpoints that rate-limit failures happen on:

- HTTP monitoring with custom headers — Set the auth headers needed to hit any third-party API on a schedule

- Status code alerting — Treat 429s as a distinct alert class from 5xx, with their own thresholds

- Response time alerts — Sustained latency increases often precede rate-limit cliffs

- Content validation — Verify a canary endpoint's response body matches expected shape (e.g., the "healthy" field above)

- Multi-region checks — Confirm rate-limit behavior across the regions your traffic comes from

- 1-minute check intervals — Detect throttling within a minute, not five

- Multi-channel alerts — Email, SMS, Slack, Discord, Microsoft Teams, webhooks

- Status page — Communicate degraded experiences to your customers when an upstream vendor throttles you

- 5-minute setup — Add the endpoint, set thresholds, and you're live

See features and pricing for details.

Summary

- Rate limits are the invisible outage: your service is up but effectively cut off from the third parties it depends on.

- Three failure modes worth monitoring distinctly: limited by upstream, limiting incoming traffic, and customers hitting your API's limits.

- Don't wait for 429s: track remaining headroom from `x-ratelimit-remaining` headers and alert before the cliff.

- Each provider names headers and behaves differently; Stripe, GitHub, OpenAI, Twilio, AWS all have their own conventions worth knowing.

- Client-side backoff with jitter, circuit breakers, and respect for `retry-after` prevents thundering-herd cascades when limits hit.

- Plan rate-limit capacity the same way you plan compute capacity: peak usage, headroom, and a path to increase before you need to.

- Alert thresholds tuned for signal: a few 429s aren't an incident; sustained rates or low headroom are.

The companies that don't get surprised by rate limits aren't the ones who never hit them. They're the ones who see them coming.