Your SaaS platform shows 99.9% uptime. But one enterprise customer experienced three hours of degraded service last month because their tenant database hit a connection limit while the rest of the platform ran fine.

Global uptime numbers hide tenant-specific failures. And in multi-tenant architectures, those hidden failures are often the ones that churn your highest-value customers.

This guide explains how to monitor multi-tenant SaaS platforms so you detect per-customer issues, isolate noisy neighbors, and deliver the uptime each tier expects.

Why Global Monitoring Is Not Enough for Multi-Tenant

Traditional monitoring answers: "Is the service up?"

Multi-tenant monitoring needs to answer: "Is the service working correctly for each customer segment?"

The difference matters because multi-tenant platforms share resources:

- Shared databases — One tenant's heavy query can degrade response times for others

- Shared compute — CPU or memory exhaustion by one tenant affects co-located tenants

- Shared queues — A burst of events from one customer can delay processing for everyone

- Shared network — Bandwidth saturation or connection pool exhaustion impacts all tenants

- Shared caches — One tenant's cache eviction pattern can thrash the cache for others

A global health check can keep returning 200 OK while specific tenants experience timeouts, stale data, or failed operations.

Failure Modes Unique to Multi-Tenant Architectures

| Failure Mode | What Happens | Who Is Affected |
|---|---|---|
| Noisy neighbor (CPU/memory) | One tenant's workload consumes shared resources | Co-located tenants |
| Database connection exhaustion | Connection pool saturated by high-usage tenant | All tenants on same DB |
| Queue backlog | Burst of events from one tenant delays processing | All tenants sharing the queue |
| Cache thrashing | One tenant's access pattern evicts other tenants' cached data | Tenants with lower request volume |
| Migration/schema drift | Tenant-specific data migration fails or runs long | Individual tenant |
| Rate limit misconfiguration | Limits too generous for one tenant, too strict for another | Affected tenants |
| Feature flag per tenant | New feature enabled for specific tenant causes errors | Targeted tenants only |
| Regional routing | Tenant routed to degraded region or pod | Tenants in that region |

These failures share a common trait: global checks miss them.

What to Monitor in a Multi-Tenant Platform

1) Tenant-Aware Health Endpoints

Go beyond /health. Create endpoints that validate per-tenant functionality:

GET /health/tenant/{tenant_id}

This endpoint should verify:

- Database connectivity for the tenant's data store

- Cache availability for the tenant's namespace

- Queue processing status for the tenant's events

- Feature flag state for the tenant

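Even a simplified version that only checks connectivity to the tenant's database shard would have caught the connection-limit outage described at the top of this guide, and it covers a large class of tenant-specific failures.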
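Here is a minimal Python sketch of the aggregation logic behind such an endpoint. The check names, the tenant IDs, and the `tenant_health` helper are hypothetical. In a real service, each check would query the tenant's shard, cache namespace, queue, and flag store, and a web framework would map `GET /health/tenant/{tenant_id}` to this function. Returning 503 on any failed check lets an external uptime monitor treat a degraded tenant as down.

```python
from typing import Callable, Dict, Tuple

# Hypothetical check signature: takes a tenant ID, returns True when healthy.
Check = Callable[[str], bool]


def tenant_health(tenant_id: str, checks: Dict[str, Check]) -> Tuple[int, dict]:
    """Run every per-tenant check; return (HTTP status, JSON-ready body)."""
    results = {}
    for name, check in checks.items():
        try:
            results[name] = "ok" if check(tenant_id) else "fail"
        except Exception as exc:
            # A check that raises counts as failed instead of crashing the endpoint.
            results[name] = f"error: {type(exc).__name__}"
    healthy = all(status == "ok" for status in results.values())
    body = {
        "tenant_id": tenant_id,
        "status": "ok" if healthy else "degraded",
        "checks": results,
    }
    # 503 lets an external monitor treat a degraded tenant as down.
    return (200 if healthy else 503), body


# Example wiring with stand-in checks; "acme" simulates a shard at its connection limit.
checks = {
    "database": lambda tenant: tenant != "acme",
    "cache": lambda tenant: True,
    "queue": lambda tenant: True,
    "feature_flags": lambda tenant: True,
}
status, body = tenant_health("acme", checks)
print(status, body["checks"]["database"])  # 503 fail
```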

2) Per-Tier Synthetic Checks

Group tenants by tier (free, pro, enterprise) and run synthetic checks that represent each tier's experience:

- Free tier — Basic read operations, rate-limited paths

- Pro tier — Full CRUD operations, API access, integrations

- Enterprise tier — SSO login, dedicated resources, SLA-critical paths

Monitor each tier separately. An issue affecting only free-tier users still matters for conversion, and an enterprise-tier degradation directly risks revenue.
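The sketch below shows one way to structure per-tier synthetic checks in Python. It assumes you keep a dedicated test tenant in each tier and wrap each user action (a read, a CRUD round trip, an SSO login) in a function that raises on failure. The step functions and the per-run latency budgets are illustrative placeholders, not prescribed values.

```python
import time
from typing import Callable, Dict, List

# Hypothetical: each step drives one user action against a synthetic
# test tenant in that tier and raises an exception on failure.
Step = Callable[[], None]


def run_tier_checks(flows: Dict[str, List[Step]],
                    budget_ms: Dict[str, float]) -> Dict[str, dict]:
    """Run each tier's synthetic flow; report pass/fail and duration per tier."""
    report = {}
    for tier, steps in flows.items():
        start = time.perf_counter()
        error = None
        for step in steps:
            try:
                step()
            except Exception as exc:
                error = f"{getattr(step, '__name__', 'step')}: {exc}"
                break  # later steps usually depend on earlier ones
        elapsed_ms = (time.perf_counter() - start) * 1000
        if error is None and elapsed_ms > budget_ms[tier]:
            error = f"over budget ({elapsed_ms:.0f} ms > {budget_ms[tier]:.0f} ms)"
        report[tier] = {"ok": error is None, "elapsed_ms": round(elapsed_ms, 1), "error": error}
    return report


# Stand-in steps; sso_login simulates an identity-provider failure.
def read_dashboard():
    pass


def crud_roundtrip():
    pass


def sso_login():
    raise RuntimeError("IdP returned 500")


report = run_tier_checks(
    {"free": [read_dashboard],
     "pro": [read_dashboard, crud_roundtrip],
     "enterprise": [sso_login]},
    {"free": 1000, "pro": 500, "enterprise": 300},
)
print({tier: r["ok"] for tier, r in report.items()})
# {'free': True, 'pro': True, 'enterprise': False}
```

The report is keyed by tier, so a failure in the enterprise flow shows up as its own signal even when the free and pro flows pass.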

3) Isolation Boundary Monitoring

Monitor the boundaries where tenant isolation can fail:

- Database connections — Track pool utilization per tenant or shard

- Memory and CPU — Monitor resource consumption per tenant namespace

- Queue depth — Track per-tenant queue length and processing latency

- Rate limits — Monitor rate limit hits per tenant to detect misconfigurations

- Storage — Track per-tenant storage consumption against quotas

When isolation boundaries are stressed, alert before they break.

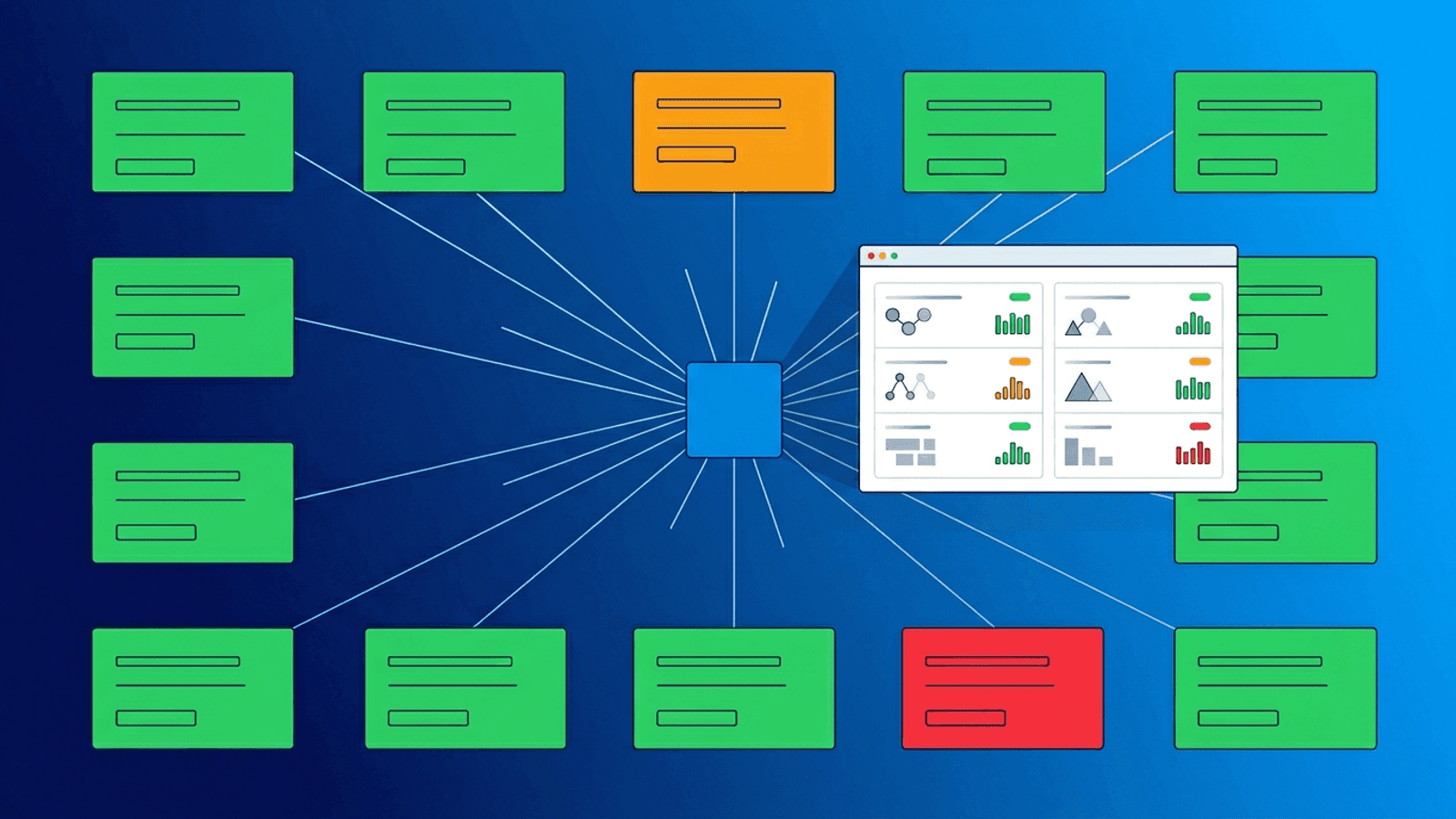
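This sketch implements the "alert before they break" rule. It compares each boundary's current usage with its limit and warns once usage crosses a fixed fraction. The `BoundaryReading` type, the metric names, and the 80% default are assumptions for illustration. The readings themselves would come from whatever exposes pool, queue, and storage metrics in your stack.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BoundaryReading:
    scope: str     # tenant ID or shard name
    metric: str    # e.g. "db_pool", "queue_depth", "storage_gb"
    used: float
    limit: float


def stressed_boundaries(readings: List[BoundaryReading], warn_at: float = 0.8) -> List[str]:
    """Return a warning for every boundary at or above `warn_at` of its limit."""
    warnings = []
    for r in readings:
        if r.limit <= 0:
            continue  # skip unset or misconfigured limits instead of dividing by zero
        ratio = r.used / r.limit
        if ratio >= warn_at:
            level = "CRITICAL" if ratio >= 1.0 else "WARN"
            warnings.append(f"{level} {r.scope} {r.metric}: {r.used:g}/{r.limit:g} ({ratio:.0%})")
    return warnings


readings = [
    BoundaryReading("shard-07", "db_pool", used=92, limit=100),
    BoundaryReading("tenant-acme", "storage_gb", used=40, limit=100),
    BoundaryReading("tenant-globex", "queue_depth", used=5200, limit=5000),
]
for line in stressed_boundaries(readings):
    print(line)
# WARN shard-07 db_pool: 92/100 (92%)
# CRITICAL tenant-globex queue_depth: 5200/5000 (104%)
```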

4) Noisy Neighbor Detection

Detect when one tenant's behavior degrades service for others:

- Track p95 latency per tenant — compare against global baseline

- Monitor per-tenant error rates — flag tenants with significantly higher error ratios

- Watch for correlation — if tenant A's request volume spikes and tenant B's latency increases, you have a noisy neighbor

Automated detection is ideal. At minimum, have dashboards that make cross-tenant correlation visible during incidents.

5) Background Job Health per Tenant

Many SaaS platforms process tenant events asynchronously:

- Data imports

- Report generation

- Webhook delivery

- Email notifications

- Billing calculations

Monitor job completion per tenant. A global "jobs are running" heartbeat misses tenant-specific failures like:

- Tenant's webhook endpoint unreachable, causing retry backlog

- Tenant's data import stuck on malformed data

- Tenant's report generation exceeding timeout
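A per-tenant freshness check can be sketched as a record of the last successful completion for every (tenant, job) pair, compared against a maximum age per job type. The job names, the thresholds, and the `stale_jobs` helper are hypothetical. Listing the expected pairs explicitly matters. A tenant whose job has never succeeded produces no timestamp at all, and a check over recorded timestamps alone would miss it.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Tuple

TenantJob = Tuple[str, str]  # (tenant ID, job name)


def stale_jobs(expected: List[TenantJob],
               last_success: Dict[TenantJob, datetime],
               max_age: Dict[str, timedelta],
               now: datetime) -> List[str]:
    """Flag expected (tenant, job) pairs that never succeeded or succeeded too long ago."""
    problems = []
    for tenant, job in expected:
        finished_at = last_success.get((tenant, job))
        if finished_at is None:
            problems.append(f"{tenant}/{job}: no successful run recorded")
        elif now - finished_at > max_age[job]:
            problems.append(f"{tenant}/{job}: last success {now - finished_at} ago")
    return problems


# Fixed clock for the example; in production use datetime.now(timezone.utc).
now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
expected = [("acme", "webhooks"), ("acme", "reports"),
            ("globex", "webhooks"), ("globex", "imports")]
last_success = {
    ("acme", "webhooks"): now - timedelta(minutes=2),
    ("acme", "reports"): now - timedelta(hours=3),
    ("globex", "webhooks"): now - timedelta(minutes=1),
}
max_age = {"webhooks": timedelta(minutes=15),
           "reports": timedelta(hours=1),
           "imports": timedelta(hours=6)}
for line in stale_jobs(expected, last_success, max_age, now):
    print(line)
# acme/reports: last success 3:00:00 ago
# globex/imports: no successful run recorded
```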

SLOs per Customer Tier

Not all tenants need the same reliability target:

| Tier | Availability SLO | Latency Target | Alert Priority |
|---|---|---|---|
| Free | 99.5% | p95 < 1000ms | Low (business hours) |
| Pro | 99.9% | p95 < 500ms | High (rapid response) |
| Enterprise | 99.95% | p95 < 300ms | Critical (wake-up) |

Define these targets explicitly, then monitor and alert against them:

- Free tier — Weekly review of aggregate metrics

- Pro tier — Alerting on sustained degradation

- Enterprise tier — Immediate alerts with dedicated escalation

This prevents over-alerting on low-impact free-tier issues while ensuring enterprise problems get instant attention.
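Each availability target translates directly into a downtime budget. The short Python calculation below assumes a 30-day window. Change the window to match how your SLA is actually measured.

```python
# Assumes a 30-day rolling window; adjust to match your SLA's measurement period.
WINDOW_MINUTES = 30 * 24 * 60  # 43,200 minutes


def downtime_budget_minutes(slo_percent: float, window_minutes: int = WINDOW_MINUTES) -> float:
    """Minutes of full unavailability the SLO tolerates within the window."""
    return round(window_minutes * (100 - slo_percent) / 100, 1)


for tier, slo in [("Free", 99.5), ("Pro", 99.9), ("Enterprise", 99.95)]:
    print(f"{tier}: {downtime_budget_minutes(slo)} min per 30 days")
# Free: 216.0 min per 30 days
# Pro: 43.2 min per 30 days
# Enterprise: 21.6 min per 30 days
```

Measured against these numbers, the three-hour incident from the introduction (180 minutes) would fit inside a free-tier budget of 216 minutes. It would consume the enterprise budget of 21.6 minutes more than eight times over.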

Alerting Strategy for Multi-Tenant Platforms

Tier-based routing

Route alerts based on affected tenant tier:

- Enterprise: Page on-call immediately, notify account manager

- Pro: Alert engineering team, respond within SLA

- Free: Log and review in next business-hours triage
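Here is a sketch of tier-based routing in Python. The action strings are hypothetical stand-ins for whatever your paging, chat, and ticketing integrations expect. When an incident touches several tiers, the highest tier's actions win. An unrecognized tier is treated like Pro, so a tenant record with a bad tier value is not silently buried in the free-tier queue.

```python
from typing import Dict, List

# Hypothetical action identifiers; map them to your paging/chat/ticketing tools.
ROUTES: Dict[str, List[str]] = {
    "enterprise": ["page:on-call", "notify:account-manager"],
    "pro": ["alert:engineering-channel"],
    "free": ["log:triage-queue"],
}
PRIORITY = ["enterprise", "pro", "free"]  # most to least urgent


def route_alert(affected_tiers: List[str]) -> List[str]:
    """Return the actions for the most urgent tier touched by an incident."""
    # Unknown or missing tiers escalate like Pro instead of being buried as Free.
    tiers = {t if t in ROUTES else "pro" for t in affected_tiers} or {"pro"}
    for tier in PRIORITY:
        if tier in tiers:
            return ROUTES[tier]
    return ROUTES["pro"]  # not reached; keeps every path returning a list


print(route_alert(["free", "enterprise"]))  # ['page:on-call', 'notify:account-manager']
print(route_alert(["free"]))                # ['log:triage-queue']
```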

Scope-based escalation

Determine blast radius before escalating:

- Single tenant — Investigate tenant-specific cause first

- Multiple tenants on same shard/region — Likely infrastructure issue, escalate

- All tenants — Global incident, full response
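Blast-radius classification can be sketched from two inputs: the set of tenants currently failing their checks, and a tenant-to-shard map. A tenant-to-region map works the same way. The category names are hypothetical. The sketch also adds a `multi-shard` bucket for a case the list above does not name: several shards affected, but not the whole platform.

```python
from typing import Dict, Set


def blast_radius(affected: Set[str], shard_of: Dict[str, str]) -> str:
    """Classify an incident's scope from failing tenants and a tenant -> shard map."""
    if not affected:
        return "none"
    if set(shard_of) <= affected:
        return "global"          # every known tenant is failing: full response
    if len(affected) == 1:
        return "single-tenant"   # look for a tenant-specific cause first
    shards = {shard_of.get(t, "unknown") for t in affected}
    if len(shards) == 1:
        return "same-shard"      # likely shared infrastructure: escalate
    return "multi-shard"         # several shards but not all: escalate


shard_of = {"acme": "db-1", "globex": "db-1", "initech": "db-2", "umbrella": "db-3"}
print(blast_radius({"acme", "globex"}, shard_of))  # same-shard
```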

Context in alerts

Include tenant context in every alert:

- Tenant ID and name

- Tier level

- Affected region/shard

- Current error rate and latency vs baseline

- Number of co-located tenants potentially affected

Without this context, responders waste time determining scope.
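Here is a sketch of an alert payload that carries the context listed above, written as a Python dataclass. The field names and the summary format are assumptions. The JSON form is what you might post to a webhook, and the one-line summary is sized for SMS or chat.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class TenantAlert:
    tenant_id: str
    tenant_name: str
    tier: str
    shard: str                  # or region
    error_rate: float           # fraction, e.g. 0.042 for 4.2%
    baseline_error_rate: float
    p95_ms: float
    baseline_p95_ms: float
    colocated_tenants: int      # other tenants sharing the shard

    def summary(self) -> str:
        """One line short enough for SMS or chat."""
        return (f"[{self.tier.upper()}] {self.tenant_name} ({self.tenant_id}) on {self.shard}: "
                f"errors {self.error_rate:.1%} vs {self.baseline_error_rate:.1%}, "
                f"p95 {self.p95_ms:.0f}ms vs {self.baseline_p95_ms:.0f}ms, "
                f"{self.colocated_tenants} co-located tenants")


alert = TenantAlert("t_123", "Acme Corp", "enterprise", "db-1",
                    error_rate=0.042, baseline_error_rate=0.002,
                    p95_ms=2300, baseline_p95_ms=180, colocated_tenants=37)
print(alert.summary())
payload = json.dumps(asdict(alert))  # body for a webhook integration
```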

Status Pages per Customer

Enterprise SaaS customers increasingly expect dedicated or filtered status pages.

Options:

- Public global status page — Shows platform-wide incidents

- Tier-filtered status page — Shows incidents relevant to the customer's tier

- Per-customer status page — Shows only components the customer uses

- Private status page — Password-protected, shows customer-specific SLA metrics

At minimum, offer a global status page. For enterprise customers, consider per-customer views that build trust and reduce support tickets during incidents.
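A per-customer or tier-filtered view comes down to a filter over the incident list. The `Incident` fields and the component names below are hypothetical. The sketch shows an incident only if it touches a component the customer uses and affects the customer's tier.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Incident:
    title: str
    components: Set[str]   # e.g. {"api", "sso"}
    tiers: Set[str]        # tiers the incident affects


def incidents_for_customer(incidents: List[Incident], uses: Set[str], tier: str) -> List[str]:
    """Titles of incidents that touch a component the customer uses and affect their tier."""
    return [i.title for i in incidents if (i.components & uses) and (tier in i.tiers)]


incidents = [
    Incident("API latency in eu-west", {"api"}, {"free", "pro", "enterprise"}),
    Incident("SSO login failures", {"sso"}, {"enterprise"}),
    Incident("Delayed CSV exports", {"exports"}, {"free"}),
]
print(incidents_for_customer(incidents, uses={"api", "sso"}, tier="enterprise"))
# ['API latency in eu-west', 'SSO login failures']
```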

Practical Implementation Checklist

Week 1: Foundation

- Add a tenant-aware health endpoint

- Create synthetic checks for each customer tier

- Set up global + per-tier uptime monitoring

- Configure tier-based alert routing

Week 2: Isolation

- Add isolation boundary metrics (DB pool, queue depth, rate limits)

- Implement noisy-neighbor detection alerts

- Create per-tenant job health monitoring

- Set per-tier SLO targets

Week 3: Communication

- Launch a public status page

- Create tier-filtered views for enterprise customers

- Document incident communication workflows per tier

- Set up automatic status updates for monitored components

How Webalert Helps

Webalert helps multi-tenant SaaS teams monitor per-customer reliability:

- HTTP/HTTPS checks for tenant-aware health endpoints from multiple regions

- Content validation to verify per-tenant response correctness

- Response time monitoring with per-endpoint latency tracking

- Heartbeat monitoring for tenant-specific background jobs and processors

- Multi-channel alerts — Email, SMS, Slack, Discord, Teams, webhooks

- On-call scheduling — Route enterprise-tier alerts to the right responder

- Status pages — Public and private status pages for customer communication

- Multiple monitors per service — Separate checks per tier, region, or customer segment

See features and pricing for details.

Summary

- Global uptime checks hide tenant-specific failures in multi-tenant SaaS.

- Monitor per-tenant health, per-tier synthetic flows, and isolation boundaries.

- Detect noisy neighbors by correlating per-tenant latency with resource consumption.

- Define SLOs per tier and alert accordingly — enterprise gets immediate response, free tier gets batched review.

- Include tenant context (ID, tier, region, blast radius) in every alert.

- Offer status pages that match customer expectations by tier.

Multi-tenant reliability is not about one uptime number. It is about ensuring every customer segment gets the experience they are paying for.